diff --git a/README.md b/README.md

index 2bd8e8dc9a..edd8893277 100644

--- a/README.md

+++ b/README.md

@@ -22,9 +22,13 @@

## 🚀 News

-* [2025-08] ✨ Trinity-RFT v0.2.1 is released with enhanced features for Agentic RL and Async RL.

+

* [2025-08] 🎵 We introduce [CHORD](https://github.com/modelscope/Trinity-RFT/tree/main/examples/mix_chord), a dynamic integration of SFT and RL for enhanced LLM fine-tuning ([paper](https://arxiv.org/pdf/2508.11408)).

-* [2025-08] We now support training on general multi-step workflows! Please check out examples for [ALFWorld](./docs/sphinx_doc/source/tutorial/example_step_wise.md) and [ReAct](./docs/sphinx_doc/source/tutorial/example_react.md).

+* [2025-08] ✨ Trinity-RFT v0.2.1 is released! Enhanced features include:

+ * Agentic RL: support training with general multi-step agentic workflows; check out the [ALFWorld](./docs/sphinx_doc/source/tutorial/example_step_wise.md) and [ReAct](./docs/sphinx_doc/source/tutorial/example_react.md) examples.

+ * Rollout-Training scheduling: introduce Scheduler, [Synchronizer](./docs/sphinx_doc/source/tutorial/synchronizer.html), and a priority queue buffer, which together enable more efficient and dynamic scheduling of the RFT process.

+ * [A benchmark tool](./benchmark) for quick verification and experimentation.

+ * RL algorithms: implement [GSPO](https://github.com/modelscope/Trinity-RFT/pull/154), [AsymRE](https://github.com/modelscope/Trinity-RFT/pull/187), [TOPR, CISPO](https://github.com/modelscope/Trinity-RFT/pull/185), [RAFT](https://github.com/modelscope/Trinity-RFT/pull/174).

* [2025-07] Trinity-RFT v0.2.0 is released.

* [2025-07] We update the [technical report](https://arxiv.org/abs/2505.17826) (arXiv v2) with new features, examples, and experiments.

* [2025-06] Trinity-RFT v0.1.1 is released.

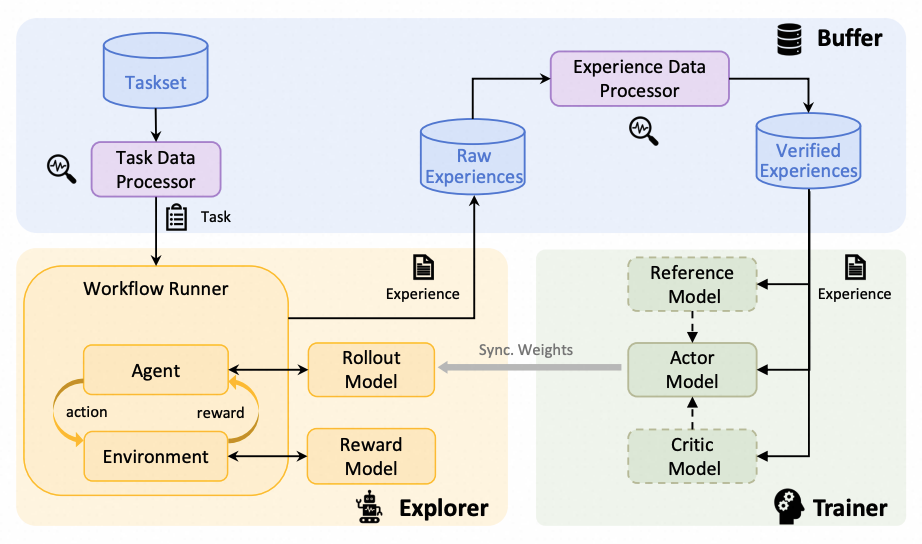

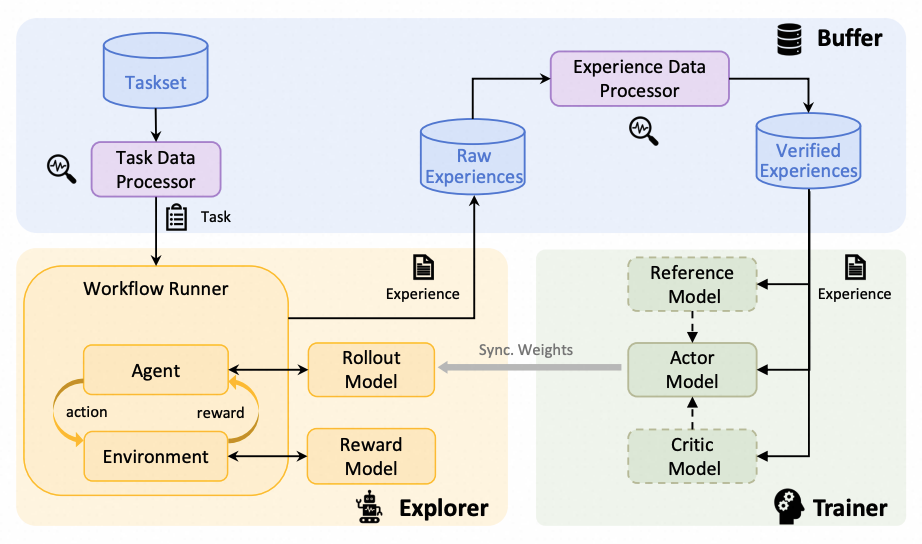

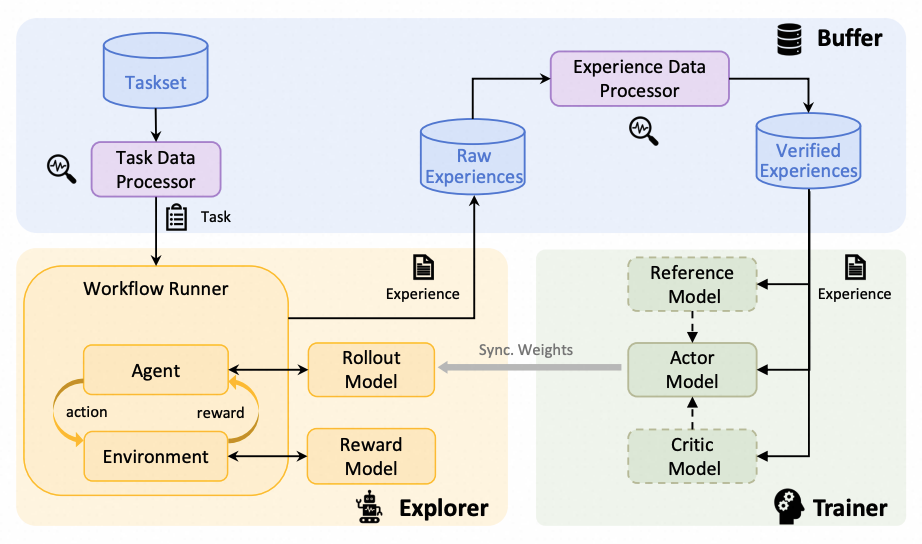

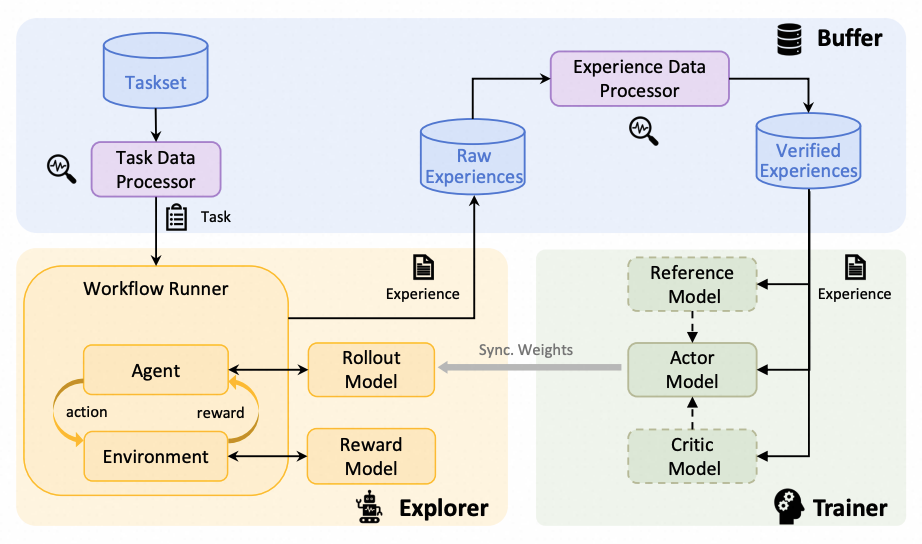

@@ -45,11 +49,11 @@ It is designed to support diverse application scenarios and serve as a unified p

* **Unified RFT Core:**

- Supports *synchronous/asynchronous*, *on-policy/off-policy*, and *online/offline* training. Rollout and training can run separately and scale independently on different devices.

+ Supports synchronous/asynchronous, on-policy/off-policy, and online/offline training. Rollout and training can run separately and scale independently on different devices.

* **First-Class Agent-Environment Interaction:**

- Handles lagged feedback, long-tailed latencies, and agent/env failures gracefully. Supports multi-turn agent-env interaction.

+ Handles lagged feedback, long-tailed latencies, and agent/env failures gracefully. Supports general multi-step agent-env interaction.

* **Optimized Data Pipelines:**

@@ -71,7 +75,7 @@ It is designed to support diverse application scenarios and serve as a unified p

-  +

+

@@ -123,12 +127,13 @@ It is designed to support diverse application scenarios and serve as a unified p

* **Adaptation to New Scenarios:**

- Implement agent-environment interaction logic in a single `Workflow` or `MultiTurnWorkflow` class. ([Example](./docs/sphinx_doc/source/tutorial/example_multi_turn.md))

+ Implement agent-environment interaction logic in a single workflow class ([Example](./docs/sphinx_doc/source/tutorial/example_multi_turn.md)),

+ or import existing workflows from agent frameworks like AgentScope ([Example](./docs/sphinx_doc/source/tutorial/example_react.md)).

* **RL Algorithm Development:**

- Develop custom RL algorithms (loss design, sampling, data processing) in compact, plug-and-play classes. ([Example](./docs/sphinx_doc/source/tutorial/example_mix_algo.md))

+ Develop custom RL algorithms (loss design, sampling strategy, data processing) in compact, plug-and-play classes ([Example](./docs/sphinx_doc/source/tutorial/example_mix_algo.md)).

* **Low-Code Usage:**

@@ -341,14 +346,11 @@ Tutorials for running different RFT modes:

+ [Offline learning by DPO or SFT](./docs/sphinx_doc/source/tutorial/example_dpo.md)

-Tutorials for adapting Trinity-RFT to a new multi-turn agentic scenario:

-

-+ [Concatenated Multi-turn tasks](./docs/sphinx_doc/source/tutorial/example_multi_turn.md)

-

-Tutorials for adapting Trinity-RFT to a general multi-step agentic scenario:

+Tutorials for adapting Trinity-RFT to multi-step agentic scenarios:

-+ [General Multi-Step tasks](./docs/sphinx_doc/source/tutorial/example_step_wise.md)

-+ [ReAct agent tasks](./docs/sphinx_doc/source/tutorial/example_react.md)

++ [Concatenated multi-turn workflow](./docs/sphinx_doc/source/tutorial/example_multi_turn.md)

++ [General multi-step workflow](./docs/sphinx_doc/source/tutorial/example_step_wise.md)

++ [ReAct workflow with an agent framework](./docs/sphinx_doc/source/tutorial/example_react.md)

Tutorials for data-related functionalities:

@@ -361,15 +363,17 @@ Tutorials for RL algorithm development/research with Trinity-RFT:

+ [RL algorithm development with Trinity-RFT](./docs/sphinx_doc/source/tutorial/example_mix_algo.md)

-Guidelines for full configurations: see [this document](./docs/sphinx_doc/source/tutorial/trinity_configs.md)

+Guidelines for full configurations:

+

++ See [this document](./docs/sphinx_doc/source/tutorial/trinity_configs.md)

Guidelines for developers and researchers:

+ [Build new RL scenarios](./docs/sphinx_doc/source/tutorial/trinity_programming_guide.md#workflows-for-rl-environment-developers)

+ [Implement new RL algorithms](./docs/sphinx_doc/source/tutorial/trinity_programming_guide.md#algorithms-for-rl-algorithm-developers)

-

-

++ [Develop new data operators](./docs/sphinx_doc/source/tutorial/trinity_programming_guide.md#operators-for-data-developers)

++ [Understand the coordination between explorer and trainer](./docs/sphinx_doc/source/tutorial/synchronizer.html)

diff --git a/README_zh.md b/README_zh.md

index 7960de4555..2538bf6398 100644

--- a/README_zh.md

+++ b/README_zh.md

@@ -22,9 +22,12 @@

## 🚀 最新动态

-* [2025-08] ✨ 发布 Trinity-RFT v0.2.1 版本,强化了 Agentic RL 和 异步 RL 相关功能。

* [2025-08] 🎵 我们推出了 [CHORD](https://github.com/modelscope/Trinity-RFT/tree/main/examples/mix_chord),一种动态整合 SFT 和 RL 来微调 LLM 的方法([论文](https://arxiv.org/pdf/2508.11408))。

-* [2025-08] Trinity-RFT 现在已经支持通用多轮工作流的训练了,请参考 [ALFWorld](./docs/sphinx_doc/source/tutorial/example_step_wise.md) 和 [ReAct](./docs/sphinx_doc/source/tutorial/example_react.md) 的例子!

+* [2025-08] ✨ 发布 Trinity-RFT v0.2.1 版本!新增功能包括:

+ * 智能体 RL:支持通用多轮工作流的训练;请参考 [ALFWorld](./docs/sphinx_doc/source/tutorial/example_step_wise.md) 和 [ReAct](./docs/sphinx_doc/source/tutorial/example_react.md) 例子。

+ * Rollout-Training 调度: 通过引入 Scheduler, [Synchronizer](./docs/sphinx_doc/source/tutorial/synchronizer.html) 以及优先队列类型 Buffer, 支持 RFT 流程中更高效与灵活的调度。

+ * [Benchmark 工具](./benchmark),用于快速验证与实验。

+ * RL 算法:实现 [GSPO](https://github.com/modelscope/Trinity-RFT/pull/154), [AsymRE](https://github.com/modelscope/Trinity-RFT/pull/187), [TOPR, CISPO](https://github.com/modelscope/Trinity-RFT/pull/185), [RAFT](https://github.com/modelscope/Trinity-RFT/pull/174) 等算法。

* [2025-07] 发布 Trinity-RFT v0.2.0 版本,新增了多项功能优化。

* [2025-07] 更新了[技术报告](https://arxiv.org/abs/2505.17826) (arXiv v2),增加了新功能、示例和实验。

* [2025-06] 发布 Trinity-RFT v0.1.1 版本,修复了已知问题并提升系统稳定性。

@@ -45,7 +48,7 @@ Trinity-RFT是一个通用、灵活且易于使用的大语言模型强化微调

* **统一的 RFT 内核:**

- 灵活应对*同步/异步*(synchronous/asynchronous)、*同策略/异策略*(on-policy/off-policy)和*在线/离线*(online/offline)等多样化训练模式,经验数据的产生(rollout)和训练(training)可独立部署在不同设备并实现分布式扩展。

+ 灵活应对同步/异步(synchronous/asynchronous)、同策略/异策略(on-policy/off-policy)和在线/离线(online/offline)等多样化训练模式,经验数据的产生(rollout)和训练(training)可独立部署在不同设备并实现分布式扩展。

* **一流的智能体-环境交互:**

@@ -71,7 +74,7 @@ Trinity-RFT是一个通用、灵活且易于使用的大语言模型强化微调

-  +

+

@@ -123,12 +126,13 @@ Trinity-RFT是一个通用、灵活且易于使用的大语言模型强化微调

* **快速构建新场景:**

- 通过编写基础交互逻辑配置即可构建新场景,只需在 `Workflow` 或 `MultiTurnWorkflow` 类中定义智能体与环境的互动规则。([查看示例](./docs/sphinx_doc/source/tutorial/example_multi_turn.md))

+ 通过编写基础交互逻辑配置即可构建新场景,只需在 workflow 类中定义智能体与环境的互动规则 ([查看示例](./docs/sphinx_doc/source/tutorial/example_multi_turn.md)),

+ 或者直接调用智能体框架(比如 AgentScope)中已有的智能体工作流 ([查看示例](./docs/sphinx_doc/source/tutorial/example_react.md))。

* **灵活开发算法模块:**

- 在轻量级算法模块中开发强化学习算法,包括了损失函数设计、数据采样与数据处理等核心环节,模块支持自由组合,便于快速迭代实验。([查看示例](./docs/sphinx_doc/source/tutorial/example_mix_algo.md))

+ 在轻量级算法模块中开发强化学习算法,包括损失函数设计、数据采样与数据处理等核心环节,模块支持自由组合,便于快速迭代实验。([查看示例](./docs/sphinx_doc/source/tutorial/example_mix_algo.md))

* **可视化操作体验:**

@@ -343,18 +347,14 @@ trinity run --config examples/grpo_gsm8k/gsm8k.yaml

将 Trinity-RFT 适配到新的多轮智能体场景的教程:

-+ [多轮任务](./docs/sphinx_doc/source/tutorial/example_multi_turn.md)

-

-

-将 Trinity-RFT 适配到通用多轮智能体场景的教程:

-

++ [拼接多轮任务](./docs/sphinx_doc/source/tutorial/example_multi_turn.md)

+ [通用多轮任务](./docs/sphinx_doc/source/tutorial/example_step_wise.md)

-+ [ReAct智能体任务](./docs/sphinx_doc/source/tutorial/example_react.md)

++ [调用智能体框架中的 ReAct 工作流](./docs/sphinx_doc/source/tutorial/example_react.md)

数据相关功能的教程:

-+ [高级数据处理及Human-in-the-loop](./docs/sphinx_doc/source/tutorial/example_data_functionalities.md)

++ [高级数据处理及 Human-in-the-loop](./docs/sphinx_doc/source/tutorial/example_data_functionalities.md)

使用 Trinity-RFT 进行 RL 算法开发/研究的教程:

@@ -362,14 +362,17 @@ trinity run --config examples/grpo_gsm8k/gsm8k.yaml

+ [使用 Trinity-RFT 进行 RL 算法开发](./docs/sphinx_doc/source/tutorial/example_mix_algo.md)

-完整配置指南:请参阅[此文档](./docs/sphinx_doc/source/tutorial/trinity_configs.md)

+完整配置指南:

+

++ 请参阅[此文档](./docs/sphinx_doc/source/tutorial/trinity_configs.md)

面向开发者和研究人员的指南:

+ [构建新的 RL 场景](./docs/sphinx_doc/source/tutorial/trinity_programming_guide.md#workflows-for-rl-environment-developers)

+ [实现新的 RL 算法](./docs/sphinx_doc/source/tutorial/trinity_programming_guide.md#algorithms-for-rl-algorithm-developers)

-

++ [开发新的数据处理操作](./docs/sphinx_doc/source/tutorial/trinity_programming_guide.md#operators-for-data-developers)

++ [理解 explorer-trainer 调度逻辑](./docs/sphinx_doc/source/tutorial/synchronizer.html)

diff --git a/docs/sphinx_doc/assets/trinity-architecture.png b/docs/sphinx_doc/assets/trinity-architecture.png

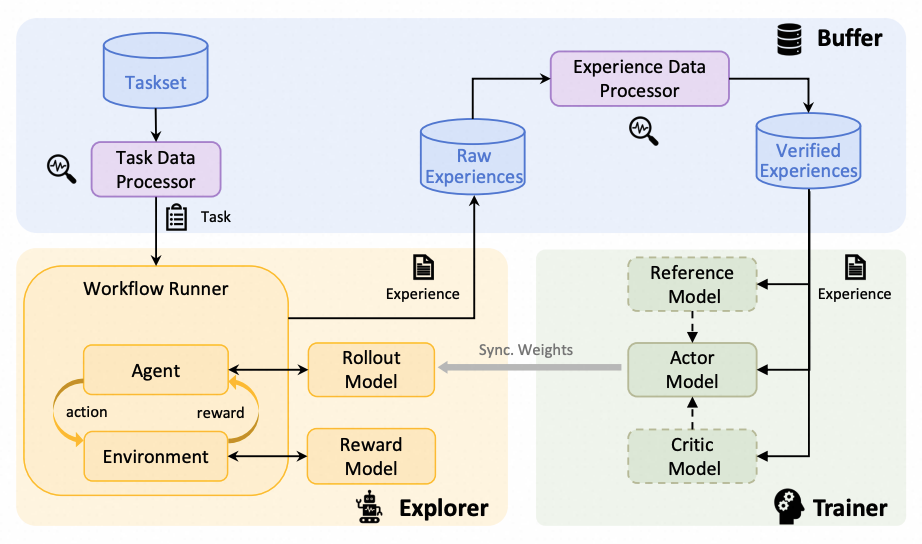

index e44e8e9c1c..ba5901b001 100644

Binary files a/docs/sphinx_doc/assets/trinity-architecture.png and b/docs/sphinx_doc/assets/trinity-architecture.png differ

diff --git a/docs/sphinx_doc/source/main.md b/docs/sphinx_doc/source/main.md

index f83e9a812d..19efd88050 100644

--- a/docs/sphinx_doc/source/main.md

+++ b/docs/sphinx_doc/source/main.md

@@ -8,9 +8,12 @@

## 🚀 News

-* [2025-08] ✨ Trinity-RFT v0.2.1 is released with enhanced features for Agentic RL and Async RL.

* [2025-08] 🎵 We introduce [CHORD](https://github.com/modelscope/Trinity-RFT/tree/main/examples/mix_chord), a dynamic integration of SFT and RL for enhanced LLM fine-tuning ([paper](https://arxiv.org/pdf/2508.11408)).

-* [2025-08] We now support training on general multi-step workflows! Please check out examples for [ALFWorld](./docs/sphinx_doc/source/tutorial/example_step_wise.md) and [ReAct](./docs/sphinx_doc/source/tutorial/example_react.md).

+* [2025-08] ✨ Trinity-RFT v0.2.1 is released! Enhanced features include:

+ * Agentic RL: support training with general multi-step agentic workflows; check out the [ALFWorld](./docs/sphinx_doc/source/tutorial/example_step_wise.md) and [ReAct](./docs/sphinx_doc/source/tutorial/example_react.md) examples.

+ * Rollout-Training scheduling: introduce Scheduler, [Synchronizer](./docs/sphinx_doc/source/tutorial/synchronizer.html), and a priority queue buffer, which together enable more efficient and dynamic scheduling of the RFT process.

+ * [A benchmark tool](./benchmark) for quick verification and experimentation.

+ * RL algorithms: implement [GSPO](https://github.com/modelscope/Trinity-RFT/pull/154), [AsymRE](https://github.com/modelscope/Trinity-RFT/pull/187), [TOPR, CISPO](https://github.com/modelscope/Trinity-RFT/pull/185), [RAFT](https://github.com/modelscope/Trinity-RFT/pull/174).

* [2025-07] Trinity-RFT v0.2.0 is released.

* [2025-07] We update the [technical report](https://arxiv.org/abs/2505.17826) (arXiv v2) with new features, examples, and experiments.

* [2025-06] Trinity-RFT v0.1.1 is released.

@@ -31,11 +34,12 @@ It is designed to support diverse application scenarios and serve as a unified p

* **Unified RFT Core:**

- Supports *synchronous/asynchronous*, *on-policy/off-policy*, and *online/offline* training. Rollout and training can run separately and scale independently on different devices.

+ Supports synchronous/asynchronous, on-policy/off-policy, and online/offline training. Rollout and training can run separately and scale independently on different devices.

* **First-Class Agent-Environment Interaction:**

- Handles lagged feedback, long-tailed latencies, and agent/env failures gracefully. Supports multi-turn agent-env interaction.

+ Handles lagged feedback, long-tailed latencies, and agent/env failures gracefully. Supports general multi-step agent-env interaction.

+

* **Optimized Data Pipelines:**

@@ -101,12 +105,13 @@ It is designed to support diverse application scenarios and serve as a unified p

* **Adaptation to New Scenarios:**

- Implement agent-environment interaction logic in a single `Workflow` or `MultiTurnWorkflow` class. ([Example](/tutorial/example_multi_turn.md))

+ Implement agent-environment interaction logic in a single workflow class ([Example](/tutorial/example_multi_turn.md)),

+ or import existing workflows from agent frameworks like AgentScope ([Example](/tutorial/example_react.md)).

* **RL Algorithm Development:**

- Develop custom RL algorithms (loss design, sampling, data processing) in compact, plug-and-play classes. ([Example](/tutorial/example_mix_algo.md))

+ Develop custom RL algorithms (loss design, sampling strategy, data processing) in compact, plug-and-play classes ([Example](/tutorial/example_mix_algo.md)).

* **Low-Code Usage:**

@@ -318,14 +323,11 @@ Tutorials for running different RFT modes:

+ [Offline learning by DPO or SFT](/tutorial/example_dpo.md)

-Tutorials for adapting Trinity-RFT to a new multi-turn agentic scenario:

-

-+ [Concatenated Multi-turn tasks](./docs/sphinx_doc/source/tutorial/example_multi_turn.md)

+Tutorials for adapting Trinity-RFT to multi-step agentic scenarios:

-Tutorials for adapting Trinity-RFT to a general multi-step agentic scenario:

-

-+ [General Multi-Step tasks](./docs/sphinx_doc/source/tutorial/example_step_wise.md)

-+ [ReAct agent tasks](./docs/sphinx_doc/source/tutorial/example_react.md)

++ [Concatenated multi-turn workflow](/tutorial/example_multi_turn.md)

++ [General multi-step workflow](/tutorial/example_step_wise.md)

++ [ReAct workflow with an agent framework](/tutorial/example_react.md)

Tutorials for data-related functionalities:

@@ -338,13 +340,17 @@ Tutorials for RL algorithm development/research with Trinity-RFT:

+ [RL algorithm development with Trinity-RFT](/tutorial/example_mix_algo.md)

-Guidelines for full configurations: see [this document](/tutorial/trinity_configs.md)

+Guidelines for full configurations:

+

++ See [this document](/tutorial/trinity_configs.md)

Guidelines for developers and researchers:

+ {ref}`Build new RL scenarios `

+ {ref}`Implement new RL algorithms `

++ [Develop new data operators](/tutorial/trinity_programming_guide.html#operators-for-data-developers)

++ [Understand the coordination between explorer and trainer](/tutorial/synchronizer.html)

For some frequently asked questions, see [FAQ](/tutorial/faq.md).

+

+

+

+

+

+