diff --git a/docs/source/en/model_doc/m2m_100.md b/docs/source/en/model_doc/m2m_100.md

index 449e06ec30c29b..d64545fafb0612 100644

--- a/docs/source/en/model_doc/m2m_100.md

+++ b/docs/source/en/model_doc/m2m_100.md

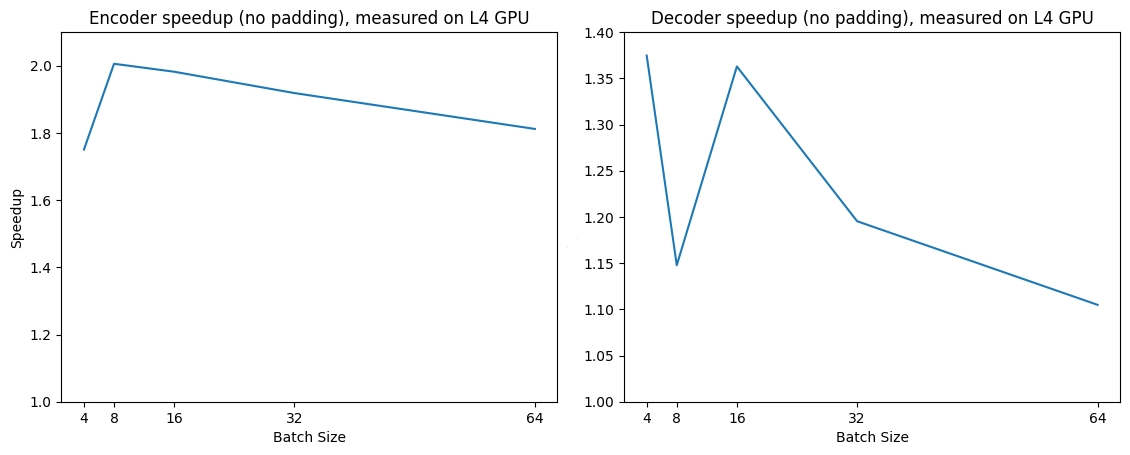

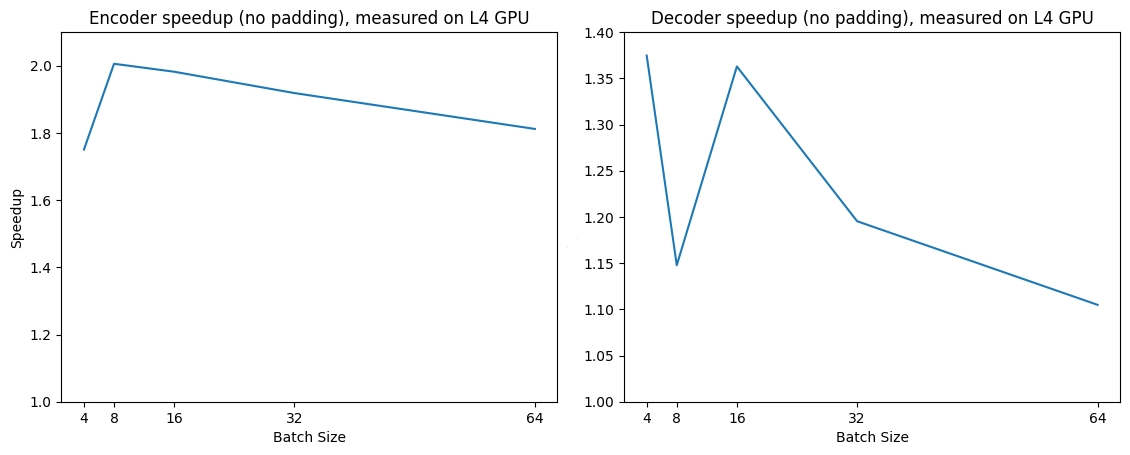

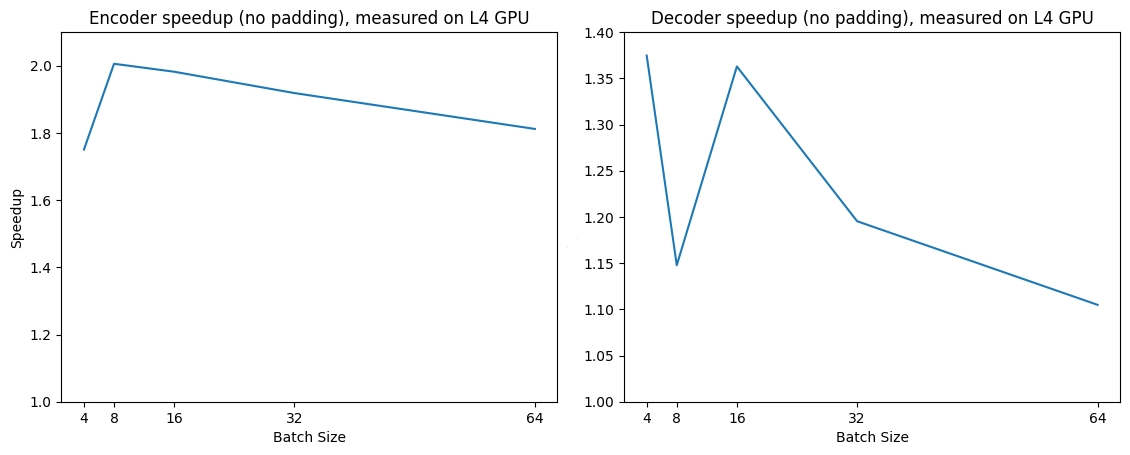

@@ -163,3 +163,21 @@ Below is an expected speedup diagram that compares pure inference time between t

+

+## Using Scaled Dot Product Attention (SDPA)

+PyTorch includes a native scaled dot-product attention (SDPA) operator as part of `torch.nn.functional`. This function

+encompasses several implementations that can be applied depending on the inputs and the hardware in use. See the

+[official documentation](https://pytorch.org/docs/stable/generated/torch.nn.functional.scaled_dot_product_attention.html)

+or the [GPU Inference](https://huggingface.co/docs/transformers/main/en/perf_infer_gpu_one#pytorch-scaled-dot-product-attention)

+page for more information.

+

+SDPA is used by default for `torch>=2.1.1` when an implementation is available, but you may also set

+`attn_implementation="sdpa"` in `from_pretrained()` to explicitly request SDPA to be used.

+

+```python

+from transformers import M2M100ForConditionalGeneration

+model = M2M100ForConditionalGeneration.from_pretrained("facebook/m2m100_418M", torch_dtype=torch.float16, attn_implementation="sdpa")

+...

+```

+

+For the best speedups, we recommend loading the model in half-precision (e.g. `torch.float16` or `torch.bfloat16`).

\ No newline at end of file

diff --git a/docs/source/en/model_doc/nllb.md b/docs/source/en/model_doc/nllb.md

index f06749cc76a67d..abdff7445aa34c 100644

--- a/docs/source/en/model_doc/nllb.md

+++ b/docs/source/en/model_doc/nllb.md

@@ -188,3 +188,21 @@ Below is an expected speedup diagram that compares pure inference time between t

+

+## Using Scaled Dot Product Attention (SDPA)

+PyTorch includes a native scaled dot-product attention (SDPA) operator as part of `torch.nn.functional`. This function

+encompasses several implementations that can be applied depending on the inputs and the hardware in use. See the

+[official documentation](https://pytorch.org/docs/stable/generated/torch.nn.functional.scaled_dot_product_attention.html)

+or the [GPU Inference](https://huggingface.co/docs/transformers/main/en/perf_infer_gpu_one#pytorch-scaled-dot-product-attention)

+page for more information.

+

+SDPA is used by default for `torch>=2.1.1` when an implementation is available, but you may also set

+`attn_implementation="sdpa"` in `from_pretrained()` to explicitly request SDPA to be used.

+

+```python

+from transformers import AutoModelForSeq2SeqLM

+model = AutoModelForSeq2SeqLM.from_pretrained("facebook/nllb-200-distilled-600M", torch_dtype=torch.float16, attn_implementation="sdpa")

+...

+```

+

+For the best speedups, we recommend loading the model in half-precision (e.g. `torch.float16` or `torch.bfloat16`).

\ No newline at end of file

diff --git a/docs/source/en/perf_infer_gpu_one.md b/docs/source/en/perf_infer_gpu_one.md

index 193af845da659d..7a0a3e4d250ed4 100644

--- a/docs/source/en/perf_infer_gpu_one.md

+++ b/docs/source/en/perf_infer_gpu_one.md

@@ -233,11 +233,13 @@ For now, Transformers supports SDPA inference and training for the following arc

* [Jamba](https://huggingface.co/docs/transformers/model_doc/jamba#transformers.JambaModel)

* [Llama](https://huggingface.co/docs/transformers/model_doc/llama#transformers.LlamaModel)

* [LLaVA-Onevision](https://huggingface.co/docs/transformers/model_doc/llava_onevision)

+* [M2M100](https://huggingface.co/docs/transformers/model_doc/m2m_100#transformers.M2M100Model)

* [Mimi](https://huggingface.co/docs/transformers/model_doc/mimi)

* [Mistral](https://huggingface.co/docs/transformers/model_doc/mistral#transformers.MistralModel)

* [Mixtral](https://huggingface.co/docs/transformers/model_doc/mixtral#transformers.MixtralModel)

* [Musicgen](https://huggingface.co/docs/transformers/model_doc/musicgen#transformers.MusicgenModel)

* [MusicGen Melody](https://huggingface.co/docs/transformers/model_doc/musicgen_melody#transformers.MusicgenMelodyModel)

+* [NLLB](https://huggingface.co/docs/transformers/model_doc/nllb)

* [OLMo](https://huggingface.co/docs/transformers/model_doc/olmo#transformers.OlmoModel)

* [OLMoE](https://huggingface.co/docs/transformers/model_doc/olmoe#transformers.OlmoeModel)

* [PaliGemma](https://huggingface.co/docs/transformers/model_doc/paligemma#transformers.PaliGemmaForConditionalGeneration)

diff --git a/src/transformers/models/m2m_100/modeling_m2m_100.py b/src/transformers/models/m2m_100/modeling_m2m_100.py

index 86a4378da29cdb..77386f0ff1ad9d 100755

--- a/src/transformers/models/m2m_100/modeling_m2m_100.py

+++ b/src/transformers/models/m2m_100/modeling_m2m_100.py

@@ -24,7 +24,12 @@

from ...activations import ACT2FN

from ...generation import GenerationMixin

from ...integrations.deepspeed import is_deepspeed_zero3_enabled

-from ...modeling_attn_mask_utils import _prepare_4d_attention_mask, _prepare_4d_causal_attention_mask

+from ...modeling_attn_mask_utils import (

+ _prepare_4d_attention_mask,

+ _prepare_4d_attention_mask_for_sdpa,

+ _prepare_4d_causal_attention_mask,

+ _prepare_4d_causal_attention_mask_for_sdpa,

+)

from ...modeling_outputs import (

BaseModelOutput,

BaseModelOutputWithPastAndCrossAttentions,

@@ -428,6 +433,113 @@ def forward(

return attn_output, None, past_key_value

+# Copied from transformers.models.bart.modeling_bart.BartSdpaAttention with Bart->M2M100

+class M2M100SdpaAttention(M2M100Attention):

+ def forward(

+ self,

+ hidden_states: torch.Tensor,

+ key_value_states: Optional[torch.Tensor] = None,

+ past_key_value: Optional[Tuple[torch.Tensor]] = None,

+ attention_mask: Optional[torch.Tensor] = None,

+ layer_head_mask: Optional[torch.Tensor] = None,

+ output_attentions: bool = False,

+ ) -> Tuple[torch.Tensor, Optional[torch.Tensor], Optional[Tuple[torch.Tensor]]]:

+ """Input shape: Batch x Time x Channel"""

+ if output_attentions or layer_head_mask is not None:

+ # TODO: Improve this warning with e.g. `model.config._attn_implementation = "manual"` once this is implemented.

+ logger.warning_once(

+ "M2M100Model is using M2M100SdpaAttention, but `torch.nn.functional.scaled_dot_product_attention` does not support `output_attentions=True` or `layer_head_mask` not None. Falling back to the manual attention"

+ ' implementation, but specifying the manual implementation will be required from Transformers version v5.0.0 onwards. This warning can be removed using the argument `attn_implementation="eager"` when loading the model.'

+ )

+ return super().forward(

+ hidden_states,

+ key_value_states=key_value_states,

+ past_key_value=past_key_value,

+ attention_mask=attention_mask,

+ layer_head_mask=layer_head_mask,

+ output_attentions=output_attentions,

+ )

+

+ # if key_value_states are provided this layer is used as a cross-attention layer

+ # for the decoder

+ is_cross_attention = key_value_states is not None

+

+ bsz, tgt_len, _ = hidden_states.size()

+

+ # get query proj

+ query_states = self.q_proj(hidden_states)

+ # get key, value proj

+ # `past_key_value[0].shape[2] == key_value_states.shape[1]`

+ # is checking that the `sequence_length` of the `past_key_value` is the same as

+ # the provided `key_value_states` to support prefix tuning

+ if (

+ is_cross_attention

+ and past_key_value is not None

+ and past_key_value[0].shape[2] == key_value_states.shape[1]

+ ):

+ # reuse k,v, cross_attentions

+ key_states = past_key_value[0]

+ value_states = past_key_value[1]

+ elif is_cross_attention:

+ # cross_attentions

+ key_states = self._shape(self.k_proj(key_value_states), -1, bsz)

+ value_states = self._shape(self.v_proj(key_value_states), -1, bsz)

+ elif past_key_value is not None:

+ # reuse k, v, self_attention

+ key_states = self._shape(self.k_proj(hidden_states), -1, bsz)

+ value_states = self._shape(self.v_proj(hidden_states), -1, bsz)

+ key_states = torch.cat([past_key_value[0], key_states], dim=2)

+ value_states = torch.cat([past_key_value[1], value_states], dim=2)

+ else:

+ # self_attention

+ key_states = self._shape(self.k_proj(hidden_states), -1, bsz)

+ value_states = self._shape(self.v_proj(hidden_states), -1, bsz)

+

+ if self.is_decoder:

+ # if cross_attention save Tuple(torch.Tensor, torch.Tensor) of all cross attention key/value_states.

+ # Further calls to cross_attention layer can then reuse all cross-attention

+ # key/value_states (first "if" case)

+ # if uni-directional self-attention (decoder) save Tuple(torch.Tensor, torch.Tensor) of

+ # all previous decoder key/value_states. Further calls to uni-directional self-attention

+ # can concat previous decoder key/value_states to current projected key/value_states (third "elif" case)

+ # if encoder bi-directional self-attention `past_key_value` is always `None`

+ past_key_value = (key_states, value_states)

+

+ query_states = self._shape(query_states, tgt_len, bsz)

+

+ # We dispatch to SDPA's Flash Attention or Efficient kernels via this `is_causal` if statement instead of an inline conditional assignment

+ # in SDPA to support both torch.compile's dynamic shapes and full graph options. An inline conditional prevents dynamic shapes from compiling.

+ # The tgt_len > 1 is necessary to match with AttentionMaskConverter.to_causal_4d that does not create a causal mask in case tgt_len == 1.

+ is_causal = True if self.is_causal and attention_mask is None and tgt_len > 1 else False

+

+ # NOTE: SDPA with memory-efficient backend is currently (torch==2.1.2) bugged when using non-contiguous inputs and a custom attn_mask,

+ # but we are fine here as `_shape` do call `.contiguous()`. Reference: https://github.com/pytorch/pytorch/issues/112577

+ attn_output = torch.nn.functional.scaled_dot_product_attention(

+ query_states,

+ key_states,

+ value_states,

+ attn_mask=attention_mask,

+ dropout_p=self.dropout if self.training else 0.0,

+ is_causal=is_causal,

+ )

+

+ if attn_output.size() != (bsz, self.num_heads, tgt_len, self.head_dim):

+ raise ValueError(

+ f"`attn_output` should be of size {(bsz, self.num_heads, tgt_len, self.head_dim)}, but is"

+ f" {attn_output.size()}"

+ )

+

+ attn_output = attn_output.transpose(1, 2)

+

+ # Use the `embed_dim` from the config (stored in the class) rather than `hidden_state` because `attn_output` can be

+ # partitioned across GPUs when using tensor-parallelism.

+ attn_output = attn_output.reshape(bsz, tgt_len, self.embed_dim)

+

+ attn_output = self.out_proj(attn_output)

+

+ return attn_output, None, past_key_value

+

+

# Copied from transformers.models.mbart.modeling_mbart.MBartEncoderLayer with MBart->M2M100, MBART->M2M100

class M2M100EncoderLayer(nn.Module):

def __init__(self, config: M2M100Config):

@@ -502,6 +614,7 @@ def forward(

M2M100_ATTENTION_CLASSES = {

"eager": M2M100Attention,

"flash_attention_2": M2M100FlashAttention2,

+ "sdpa": M2M100SdpaAttention,

}

@@ -632,6 +745,7 @@ class M2M100PreTrainedModel(PreTrainedModel):

supports_gradient_checkpointing = True

_no_split_modules = ["M2M100EncoderLayer", "M2M100DecoderLayer"]

_supports_flash_attn_2 = True

+ _supports_sdpa = True

def _init_weights(self, module):

std = self.config.init_std

@@ -805,6 +919,7 @@ def __init__(self, config: M2M100Config, embed_tokens: Optional[nn.Embedding] =

self.layers = nn.ModuleList([M2M100EncoderLayer(config) for _ in range(config.encoder_layers)])

self.layer_norm = nn.LayerNorm(config.d_model)

self._use_flash_attention_2 = config._attn_implementation == "flash_attention_2"

+ self._use_sdpa = config._attn_implementation == "sdpa"

self.gradient_checkpointing = False

# Initialize weights and apply final processing

@@ -887,6 +1002,11 @@ def forward(

if attention_mask is not None:

if self._use_flash_attention_2:

attention_mask = attention_mask if 0 in attention_mask else None

+ elif self._use_sdpa and head_mask is None and not output_attentions:

+ # output_attentions=True & head_mask can not be supported when using SDPA, fall back to

+ # the manual implementation that requires a 4D causal mask in all cases.

+ # [bsz, seq_len] -> [bsz, 1, tgt_seq_len, src_seq_len]

+ attention_mask = _prepare_4d_attention_mask_for_sdpa(attention_mask, inputs_embeds.dtype)

else:

# [bsz, seq_len] -> [bsz, 1, tgt_seq_len, src_seq_len]

attention_mask = _prepare_4d_attention_mask(attention_mask, inputs_embeds.dtype)

@@ -981,6 +1101,7 @@ def __init__(self, config: M2M100Config, embed_tokens: Optional[nn.Embedding] =

)

self.layers = nn.ModuleList([M2M100DecoderLayer(config) for _ in range(config.decoder_layers)])

self._use_flash_attention_2 = config._attn_implementation == "flash_attention_2"

+ self._use_sdpa = config._attn_implementation == "sdpa"

self.layer_norm = nn.LayerNorm(config.d_model)

self.gradient_checkpointing = False

@@ -1094,6 +1215,15 @@ def forward(

if self._use_flash_attention_2:

# 2d mask is passed through the layers

combined_attention_mask = attention_mask if (attention_mask is not None and 0 in attention_mask) else None

+ elif self._use_sdpa and not output_attentions and cross_attn_head_mask is None:

+ # output_attentions=True & cross_attn_head_mask can not be supported when using SDPA, and we fall back on

+ # the manual implementation that requires a 4D causal mask in all cases.

+ combined_attention_mask = _prepare_4d_causal_attention_mask_for_sdpa(

+ attention_mask,

+ input_shape,

+ inputs_embeds,

+ past_key_values_length,

+ )

else:

# 4d mask is passed through the layers

combined_attention_mask = _prepare_4d_causal_attention_mask(

@@ -1104,6 +1234,15 @@ def forward(

if encoder_hidden_states is not None and encoder_attention_mask is not None:

if self._use_flash_attention_2:

encoder_attention_mask = encoder_attention_mask if 0 in encoder_attention_mask else None

+ elif self._use_sdpa and cross_attn_head_mask is None and not output_attentions:

+ # output_attentions=True & cross_attn_head_mask can not be supported when using SDPA, and we fall back on

+ # the manual implementation that requires a 4D causal mask in all cases.

+ # [bsz, seq_len] -> [bsz, 1, tgt_seq_len, src_seq_len]

+ encoder_attention_mask = _prepare_4d_attention_mask_for_sdpa(

+ encoder_attention_mask,

+ inputs_embeds.dtype,

+ tgt_len=input_shape[-1],

+ )

else:

# [bsz, seq_len] -> [bsz, 1, tgt_seq_len, src_seq_len]

encoder_attention_mask = _prepare_4d_attention_mask(