
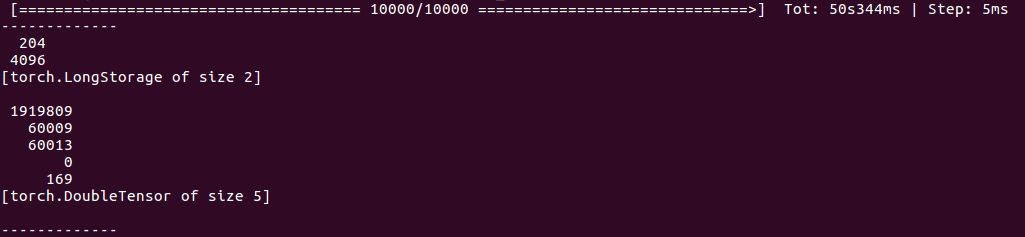

I have a tensor of shape 204x4096, where 204 is the number of samples and 4096 is the feature dimension. After 10000 iterations of k-means clustering, it returns cluster counts like this: [1919809, 60009, 60013, 0, 169]. This is definitely wrong, since I only have 204 samples. A screenshot is attached.
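As a quick sanity check on the numbers above, here is a minimal sketch that sums the reported counts and compares the total with the number of samples. The variable names are only illustrative and are not taken from the clustering code.

```python
# Values reported in this issue.
counts = [1919809, 60009, 60013, 0, 169]
n_samples = 204
n_iterations = 10_000

total = sum(counts)

# If each sample were counted once, the total would equal n_samples.
matches_samples = total == n_samples

# Check whether the total instead equals samples multiplied by iterations.
matches_samples_times_iterations = total == n_samples * n_iterations

print(total)
print(matches_samples)
print(matches_samples_times_iterations)
```

The counts add up to 204 x 10000 rather than 204. One possible reading, which is only an assumption because it depends on how the counts are updated in the clustering code, is that they are accumulated across iterations instead of being reset each time.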

And here is my code. Could anyone please tell me what I did wrong?
