-

Notifications

You must be signed in to change notification settings - Fork 525

New issue

Have a question about this project? Sign up for a free GitHub account to open an issue and contact its maintainers and the community.

By clicking “Sign up for GitHub”, you agree to our terms of service and privacy statement. We’ll occasionally send you account related emails.

Already on GitHub? Sign in to your account

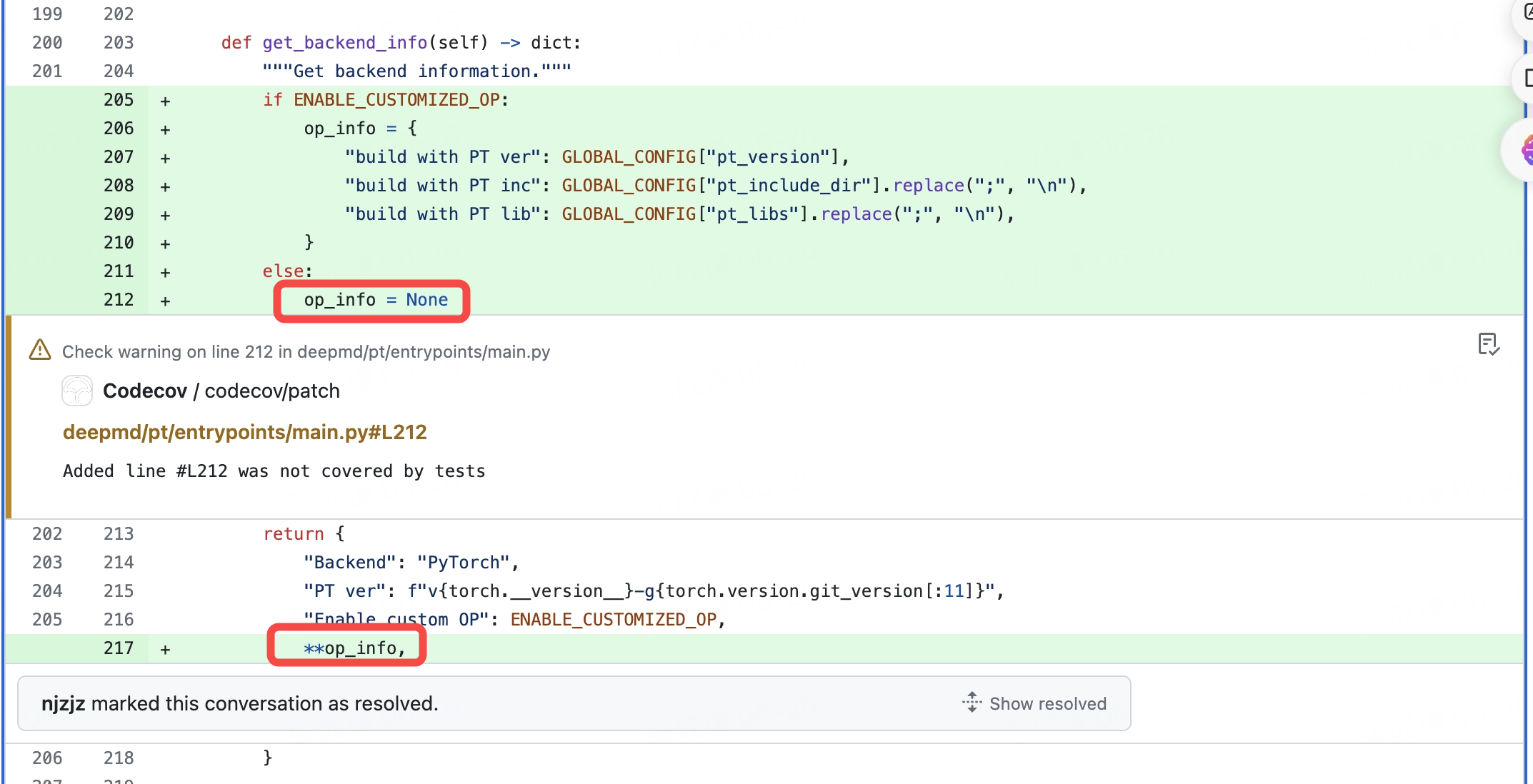

Fix(load_library): When ENABLE_CUSTOMIZED_OP = False, change op_info = None to op_info = {}

#3912



Actionable comments posted: 0

Outside diff range and nitpick comments (2)

deepmd/pt/entrypoints/main.py (2)

Line range hint 117-117: Remove unused variable.

The variable `f` is opened but never used, which could lead to unnecessary resource allocation.

    - with h5py.File(stat_file_path_single, "w") as f:
    + h5py.File(stat_file_path_single, "w").close()

Line range hint 374-377: Simplify assignment using ternary operator.

Using a ternary operator here will make the code more concise and readable.

    - if not isinstance(args, argparse.Namespace):
    -     FLAGS = parse_args(args=args)
    - else:
    -     FLAGS = args
    + FLAGS = parse_args(args=args) if not isinstance(args, argparse.Namespace) else args
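The equivalence claimed by the ternary suggestion can be checked with a small sketch. The `parse_args` below is a hypothetical stand-in with a single positional argument, not the project's real parser, and `resolve_flags` is an illustrative name that does not appear in the PR.

```python
import argparse


def parse_args(args=None):
    # Hypothetical minimal parser; the real one defines many more options.
    parser = argparse.ArgumentParser()
    parser.add_argument("command")
    return parser.parse_args(args)


def resolve_flags(args):
    # Conditional expression equivalent to the four-line if/else in the review comment.
    return parse_args(args=args) if not isinstance(args, argparse.Namespace) else args


# An already-parsed Namespace is passed through untouched.
ns = argparse.Namespace(command="train")
assert resolve_flags(ns) is ns

# A raw argument list is parsed into a Namespace.
assert resolve_flags(["train"]).command == "train"
```

Both forms behave the same; the choice between them is purely stylistic.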

Codecov Report

Attention: Patch coverage is

Additional details and impacted files

    @@           Coverage Diff           @@
    ##            devel    #3912   +/-   ##
    =======================================
      Coverage   82.72%   82.72%
    =======================================
      Files         519      519
      Lines       50515    50515
      Branches     3015     3015
    =======================================
      Hits        41790    41790
      Misses       7788     7788
      Partials      937      937

…fo = None` to `op_info = {}` (deepmodeling#3912)

Solve issue deepmodeling#3911

When I run `examples/water/dpa2` using `dp --pt train input_torch.json`, an error occurs:

    To get the best performance, it is recommended to adjust the number of threads by setting the environment variables OMP_NUM_THREADS, DP_INTRA_OP_PARALLELISM_THREADS, and DP_INTER_OP_PARALLELISM_THREADS. See https://deepmd.rtfd.io/parallelism/ for more information.
    [2024-06-26 07:53:43,325] DEEPMD INFO DeepMD version: 2.2.0b1.dev892+g73dab63f.d20240612
    [2024-06-26 07:53:43,325] DEEPMD INFO Configuration path: input_torch.json
    Traceback (most recent call last):
      File "/home/data/zhangcq/conda_env/deepmd-pt-1026/bin/dp", line 8, in <module>
        sys.exit(main())
      File "/home/data/zcq/deepmd-source/deepmd-kit/deepmd/main.py", line 842, in main
        deepmd_main(args)
      File "/home/data/zhangcq/conda_env/deepmd-pt-1026/lib/python3.10/site-packages/torch/distributed/elastic/multiprocessing/errors/__init__.py", line 346, in wrapper
        return f(*args, **kwargs)
      File "/home/data/zcq/deepmd-source/deepmd-kit/deepmd/pt/entrypoints/main.py", line 384, in main
        train(FLAGS)
      File "/home/data/zcq/deepmd-source/deepmd-kit/deepmd/pt/entrypoints/main.py", line 223, in train
        SummaryPrinter()()
      File "/home/data/zcq/deepmd-source/deepmd-kit/deepmd/utils/summary.py", line 62, in __call__
        build_info.update(self.get_backend_info())
      File "/home/data/zcq/deepmd-source/deepmd-kit/deepmd/pt/entrypoints/main.py", line 213, in get_backend_info
        return {
    TypeError: 'NoneType' object is not a mapping

This bug was introduced by PR deepmodeling#3895.

When `op_info` is `None`, `{**op_info}` raises a `TypeError`, because dictionary unpacking requires a mapping. Changing `op_info = None` to `op_info = {}` solves the issue.
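The failure mode can be reproduced in isolation. The sketch below is a minimal illustration, not the project's actual code: `get_backend_info` here is a hypothetical free function, and the `"Backend"` key is an assumed placeholder for whatever the real method returns.

```python
def get_backend_info(op_info):
    # Hypothetical stand-in: merge op_info into a summary dict via ** unpacking.
    return {
        "Backend": "PyTorch",  # assumed placeholder entry
        **op_info,
    }


# With an empty dict, unpacking contributes nothing and the call succeeds.
assert get_backend_info({}) == {"Backend": "PyTorch"}

# With None, dictionary unpacking raises TypeError because None is not a mapping.
try:
    get_backend_info(None)
except TypeError as exc:
    error_message = str(exc)
else:
    error_message = None

assert error_message is not None
```

Using `{}` as the default keeps the `**op_info` call site unchanged; an alternative would be `**(op_info or {})` at the point of use, but the PR takes the simpler route of fixing the initial value.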

## Summary by CodeRabbit

- **Bug Fixes**
  - Improved system stability by initializing `op_info` as an empty dictionary instead of `None`, preventing potential runtime errors.
