
Commit

This commit does not belong to any branch on this repository, and may belong to a fork outside of the repository.


1 parent fd860a8 commit 7081d9d

Showing 11 changed files with 172 additions and 35 deletions.


| Original file line number | Diff line number | Diff line change |

|---|---|---|

| @@ -0,0 +1,9 @@ | ||

| # How to generate an Estuary Flow Refresh Token | ||

|

|

||

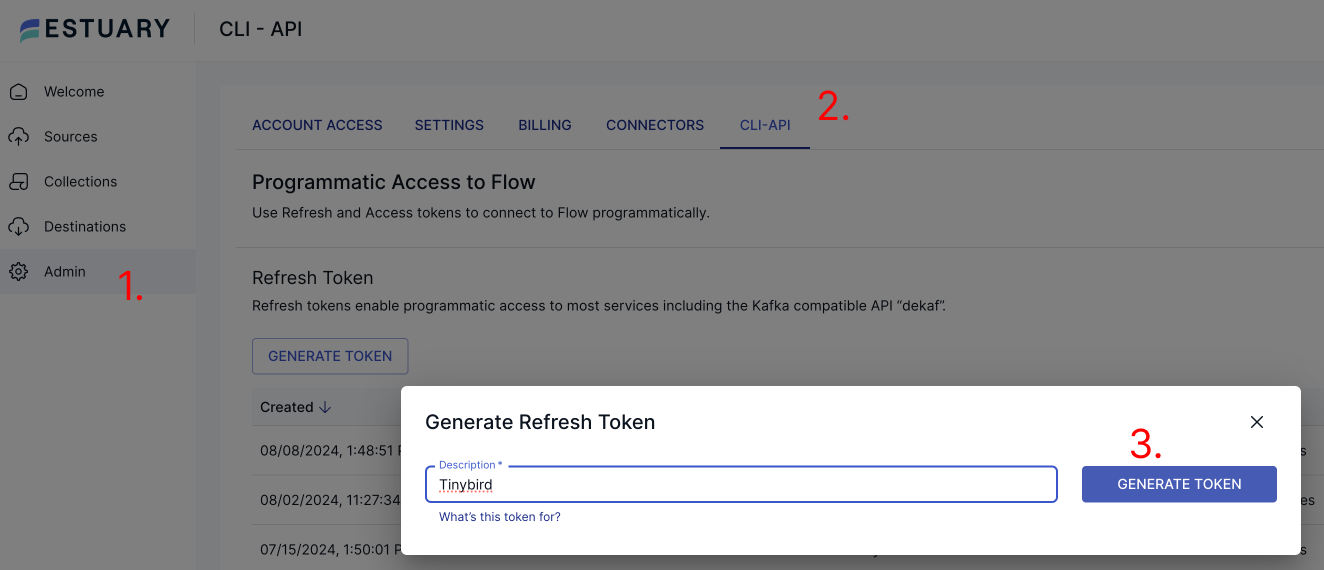

| To generate a Refresh Token, navigate to the Admin page, then head over to the CLI-API section. | ||

|

|

||

| Press the Generate token button to bring up the modal, where you can give your token a name. | ||

| Choose a name that identifies the service the token will give access to. | ||

|

|

||

|  | ||

|

|


| Original file line number | Diff line number | Diff line change |

|---|---|---|

| @@ -0,0 +1,67 @@ | ||

| # Bytewax | ||

|

|

||

| This guide demonstrates how to use Estuary Flow to stream data to Bytewax using the Kafka-compatible Dekaf API. | ||

|

|

||

| [Bytewax](https://bytewax.io/) is a Python framework for building scalable dataflow applications, designed for | ||

| high-throughput, low-latency data processing tasks. | ||

|

|

||

| ## Connecting Estuary Flow to Bytewax | ||

|

|

||

| 1. [Generate a refresh token](/guides/how_to_generate_refresh_token) for the Bytewax connection from the Estuary Admin | ||

| Dashboard. | ||

|

|

||

| 2. Install Bytewax and the Kafka Python client: | ||

|

|

||

| ``` | ||

| pip install bytewax confluent-kafka | ||

| ``` | ||

|

|

||

| 3. Create a Python script for your Bytewax dataflow, using the following template: | ||

|

|

||

| ```python | ||

| import json | ||

| import os | ||

|

|

||

| # Uses the operator-based API of Bytewax 0.18 and later | ||

| import bytewax.operators as op | ||

| from bytewax.connectors.kafka import KafkaSource | ||

| from bytewax.connectors.stdio import StdOutSink | ||

| from bytewax.dataflow import Dataflow | ||

|

|

||

| # Estuary Flow Dekaf configuration | ||

| KAFKA_BOOTSTRAP_SERVERS = "dekaf.estuary.dev:9092" | ||

| KAFKA_TOPIC = "/full/nameof/your/collection" | ||

|

|

||

| # Parse incoming messages | ||

| def parse_message(msg): | ||

|     # msg.value holds the raw message payload | ||

|     data = json.loads(msg.value) | ||

|     # Process your data here | ||

|     return data | ||

|

|

||

| # Define your dataflow | ||

| src = KafkaSource(brokers=[KAFKA_BOOTSTRAP_SERVERS], topics=[KAFKA_TOPIC], add_config={ | ||

|     "security.protocol": "SASL_SSL", | ||

|     "sasl.mechanism": "PLAIN", | ||

|     "sasl.username": "{}", | ||

|     # Token generated in step 1, read from the environment | ||

|     "sasl.password": os.getenv("DEKAF_TOKEN"), | ||

| }) | ||

|

|

||

| flow = Dataflow("estuary_dekaf") | ||

| stream = op.input("input", flow, src) | ||

| parsed = op.map("parse", stream, parse_message) | ||

| # Add more processing steps as needed | ||

| op.output("output", parsed, StdOutSink()) | ||

|

|

||

| if __name__ == "__main__": | ||

|     from bytewax.testing import run_main | ||

|     run_main(flow) | ||

| ``` | ||

|

|

||

| 4. Replace `"/full/nameof/your/collection"` with your actual collection name from Estuary Flow. | ||

|

|

||

| 5. Run your Bytewax dataflow: | ||

|

|

||

| ``` | ||

| python your_dataflow_script.py | ||

| ``` | ||

|

|

||

| 6. Your Bytewax dataflow is now processing data from Estuary Flow in real time. |
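
The connection settings from the template can be collected in a small helper so that a missing token fails early rather than surfacing later as an authentication error from the broker. This is only a sketch: `build_dekaf_config` is a hypothetical name, and the `DEKAF_TOKEN` environment variable is the one the template reads.

```python
import os


def build_dekaf_config(token=None):
    """Return the Kafka client settings for Dekaf (hypothetical helper)."""
    # Fall back to the DEKAF_TOKEN environment variable used in the template.
    token = token or os.getenv("DEKAF_TOKEN")
    if not token:
        raise ValueError("Set DEKAF_TOKEN to the token generated in step 1")
    return {
        "security.protocol": "SASL_SSL",
        "sasl.mechanism": "PLAIN",
        "sasl.username": "{}",
        "sasl.password": token,
    }


config = build_dekaf_config("example-token")  # placeholder, not a real token
print(sorted(config))
```

The returned dictionary could be passed as `add_config` to the Kafka source in the template.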


| Original file line number | Diff line number | Diff line change |

|---|---|---|

| @@ -0,0 +1,40 @@ | ||

| # Imply Polaris | ||

|

|

||

| This guide demonstrates how to use Estuary Flow to stream data to Imply Polaris using the Kafka-compatible Dekaf API. | ||

|

|

||

| [Imply Polaris](https://imply.io/polaris) is a fully managed, cloud-native Database-as-a-Service (DBaaS) built on Apache | ||

| Druid, designed for real-time analytics on streaming and batch data. | ||

|

|

||

| ## Connecting Estuary Flow to Imply Polaris | ||

|

|

||

| 1. [Generate a refresh token](/guides/how_to_generate_refresh_token) for the Imply Polaris connection from the Estuary | ||

| Admin Dashboard. | ||

|

|

||

| 2. Log in to your Imply Polaris account and navigate to your project. | ||

|

|

||

| 3. In the left sidebar, click on "Tables" and then "Create Table". | ||

|

|

||

| 4. Choose "Kafka" as the input source for your new table. | ||

|

|

||

| 5. In the Kafka configuration section, enter the following details: | ||

|

|

||

| - **Bootstrap Servers**: `dekaf.estuary.dev:9092` | ||

| - **Topic**: Your Estuary Flow collection name (e.g., `/my-organization/my-collection`) | ||

| - **Security Protocol**: `SASL_SSL` | ||

| - **SASL Mechanism**: `PLAIN` | ||

| - **SASL Username**: `{}` | ||

| - **SASL Password**: `Your generated Estuary Access Token` | ||

|

|

||

| 6. For the "Input Format", select "avro". | ||

|

|

||

| 7. Configure the Schema Registry settings: | ||

| - **Schema Registry URL**: `https://dekaf.estuary.dev` | ||

| - **Schema Registry Username**: `{}` (same as SASL Username) | ||

| - **Schema Registry Password**: `The same Estuary Access Token as above` | ||

|

|

||

| 8. In the "Schema" section, Imply Polaris should automatically detect the schema from your Avro data. Review and adjust | ||

| the column definitions as needed. | ||

|

|

||

| 9. Review and finalize your table configuration, then click "Create Table". | ||

|

|

||

| 10. Your Imply Polaris table should now start ingesting data from Estuary Flow. |
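
Before entering the Schema Registry settings in Polaris, it can help to check the credentials from a terminal. The sketch below builds an HTTP Basic auth request with the same username and password as step 7, without sending it. It makes two assumptions: that the registry accepts Basic auth, as Confluent-style registries usually do, and that it serves the standard `/subjects` listing path. `registry_request` is a hypothetical helper name.

```python
import base64
import urllib.request

REGISTRY_URL = "https://dekaf.estuary.dev"  # Schema Registry URL from step 7


def registry_request(token, path="/subjects"):
    """Build (but do not send) an authenticated schema registry request.

    The /subjects path is the usual Confluent Schema Registry listing
    endpoint; that Dekaf serves it is an assumption of this sketch.
    """
    # The username is the literal string "{}", as in the SASL settings.
    credentials = base64.b64encode(f"{{}}:{token}".encode()).decode()
    request = urllib.request.Request(REGISTRY_URL + path)
    request.add_header("Authorization", f"Basic {credentials}")
    return request


request = registry_request("example-token")  # placeholder, not a real token
print(request.full_url)
# To actually send it: urllib.request.urlopen(request, timeout=10)
```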


| Original file line number | Diff line number | Diff line change |

|---|---|---|

| @@ -0,0 +1,40 @@ | ||

| # SingleStore (Cloud) | ||

|

|

||

| This guide demonstrates how to use Estuary Flow to stream data to SingleStore using the Kafka-compatible Dekaf API. | ||

|

|

||

| [SingleStore](https://www.singlestore.com/) is a distributed SQL database designed for data-intensive applications, | ||

| offering high performance for both transactional and analytical workloads. | ||

|

|

||

| ## Connecting Estuary Flow to SingleStore | ||

|

|

||

| 1. [Generate a refresh token](/guides/how_to_generate_refresh_token) for the SingleStore connection from the Estuary | ||

| Admin Dashboard. | ||

|

|

||

| 2. In the SingleStore Cloud Portal, navigate to the SQL Editor section of the Data Studio. | ||

|

|

||

| 3. Execute the following script to create a table and an ingestion pipeline to hydrate it. | ||

|

|

||

| This example will ingest data from the demo wikipedia collection in Estuary Flow. | ||

|

|

||

| ```sql | ||

| CREATE TABLE test_table (id NUMERIC, server_name VARCHAR(255), title VARCHAR(255)); | ||

|

|

||

| CREATE PIPELINE test AS | ||

| LOAD DATA KAFKA "dekaf.estuary.dev:9092/demo/wikipedia/recentchange-sampled" | ||

| CONFIG '{ | ||

|     "security.protocol": "SASL_SSL", | ||

|     "sasl.mechanism": "PLAIN", | ||

|     "sasl.username": "{}", | ||

|     "broker.address.family": "v4", | ||

|     "schema.registry.username": "{}", | ||

|     "fetch.wait.max.ms": "2000" | ||

| }' | ||

| CREDENTIALS '{ | ||

|     "sasl.password": "ESTUARY_ACCESS_TOKEN", | ||

|     "schema.registry.password": "ESTUARY_ACCESS_TOKEN" | ||

| }' | ||

| INTO TABLE test_table | ||

| FORMAT AVRO SCHEMA REGISTRY 'https://dekaf.estuary.dev' | ||

| ( id <- id, server_name <- server_name, title <- title ); | ||

| ``` | ||

| 4. Start the pipeline with `START PIPELINE test;`. It should then begin ingesting data from Estuary Flow into SingleStore. |
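
Two parts of the script change with each collection: the Kafka endpoint, which joins the broker address and the collection name, and the column mapping on the last line. For wider tables both can be generated rather than typed by hand. The helpers below are hypothetical names and cover only the identity mapping used in the example, where each table column is fed by the Avro field of the same name.

```python
def kafka_endpoint(collection, broker="dekaf.estuary.dev:9092"):
    """Join the broker address and collection name as LOAD DATA KAFKA expects."""
    return f"{broker}/{collection.strip('/')}"


def column_mapping(columns):
    """Render an identity mapping clause such as '( id <- id, title <- title )'."""
    pairs = ", ".join(f"{name} <- {name}" for name in columns)
    return f"( {pairs} )"


print(kafka_endpoint("demo/wikipedia/recentchange-sampled"))
print(column_mapping(["id", "server_name", "title"]))
```

For the demo collection this reproduces the endpoint and mapping strings used in the script above.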
