MMYOLO is an open source toolbox for YOLO series algorithms based on PyTorch and MMDetection. It is a part of the OpenMMLab project.

The master branch works with PyTorch 1.6+.

Major features

-

Unified and convenient benchmark

MMYOLO unifies the implementation of modules in various YOLO algorithms and provides a unified benchmark. Users can compare and analyze in a fair and convenient way.

-

Rich and detailed documentation

MMYOLO provides rich documentation for getting started, model deployment, advanced usages, and algorithm analysis, making it easy for users at different levels to get started and make extensions quickly.

-

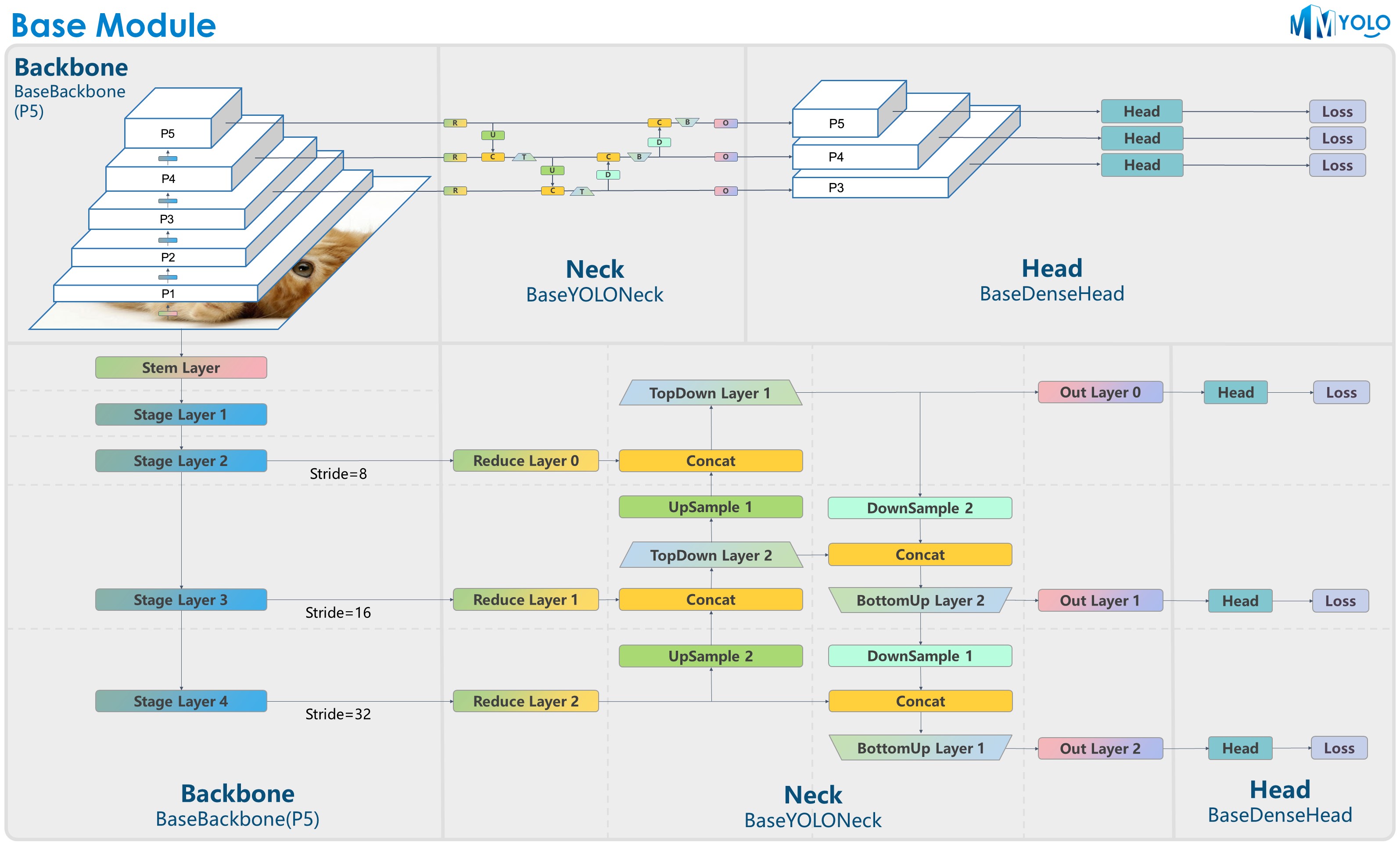

Modular Design

MMYOLO decomposes the framework into different components where users can easily customize a model by combining different modules with various training and testing strategies.

The figure was contributed by RangeKing@GitHub. Thank you very much!
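To make the modular design more concrete: OpenMMLab projects typically describe a model as a nested config dict whose `type` key is resolved through a registry. The sketch below imitates that idea in plain Python. It is an illustration only, not MMYOLO's or MMEngine's actual API; `REGISTRY`, `register`, `build`, and the `Toy*` classes are hypothetical names invented for this example.

```python
# Hypothetical toy registry illustrating config-driven composition.
# None of these names come from MMYOLO or MMEngine.
REGISTRY = {}


def register(cls):
    """Class decorator that records a component under its class name."""
    REGISTRY[cls.__name__] = cls
    return cls


def build(cfg):
    """Instantiate the class named by cfg['type'] with the remaining keys."""
    params = dict(cfg)  # copy so the caller's config is left untouched
    cls = REGISTRY[params.pop("type")]
    return cls(**params)


@register
class ToyBackbone:
    def __init__(self, depth=1):
        self.depth = depth

    def describe(self):
        return f"ToyBackbone(depth={self.depth})"


@register
class ToyNeck:
    def __init__(self, channels=32):
        self.channels = channels

    def describe(self):
        return f"ToyNeck(channels={self.channels})"


@register
class ToyDetector:
    """Composes sub-components from their own nested configs."""

    def __init__(self, backbone, neck):
        self.backbone = build(backbone)
        self.neck = build(neck)

    def describe(self):
        return f"ToyDetector({self.backbone.describe()} -> {self.neck.describe()})"


# Swapping a component only requires editing the config, not the detector.
model_cfg = dict(
    type="ToyDetector",
    backbone=dict(type="ToyBackbone", depth=3),
    neck=dict(type="ToyNeck", channels=64),
)

if __name__ == "__main__":
    print(build(model_cfg).describe())
```

In this sketch, replacing the backbone or neck means changing one entry in `model_cfg`; the detector class itself stays unchanged.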

v0.1.1 was released on 29/9/2022:

- Support RTMDet.

- Support backbone customization plugins and update the How-to documentation.

For release history and update details, please refer to the changelog.

MMYOLO relies on PyTorch, MMCV, MMEngine, and MMDetection. Below are quick steps for installation. Please refer to the Install Guide for more detailed instructions.

```shell
conda create -n open-mmlab python=3.8 pytorch==1.10.1 torchvision==0.11.2 cudatoolkit=11.3 -c pytorch -y
conda activate open-mmlab
pip install openmim
mim install mmengine
mim install "mmcv>=2.0.0rc1,<2.1.0"
mim install "mmdet>=3.0.0rc1,<3.1.0"
git clone https://github.com/open-mmlab/mmyolo.git
cd mmyolo
# Install albumentations
pip install -r requirements/albu.txt
# Install MMYOLO
mim install -v -e .
```

MMYOLO is based on MMDetection and adopts the same code structure and design approach. To make better use of it, please read the MMDetection Overview first to gain a basic understanding of MMDetection.
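After running the installation commands above, it can be useful to confirm that the installed MMCV and MMDetection versions fall inside the ranges those commands request. The following is a minimal sketch using only the Python standard library, not a tool shipped with MMYOLO. It assumes the installed distribution names are `mmcv` and `mmdet`, as passed to `mim install`; the helper names (`release_key`, `in_range`, `check_environment`) are hypothetical. As a simplification, pre-release suffixes such as `rc1` are ignored, so only the numeric release part is compared.

```python
import re
from importlib import metadata

# Version ranges copied from the install commands:
# (distribution name, inclusive lower bound, exclusive upper bound).
# The distribution names are an assumption based on the `mim install` arguments.
REQUIREMENTS = [
    ("mmcv", "2.0.0rc1", "2.1.0"),
    ("mmdet", "3.0.0rc1", "3.1.0"),
]


def release_key(version):
    """Return the numeric release as a tuple with trailing zeros dropped.

    For example '2.0.1' -> (2, 0, 1) and '2.1.0' -> (2, 1).
    Simplification: suffixes such as 'rc1' or '+cu113' are ignored.
    """
    match = re.match(r"\d+(?:\.\d+)*", version)
    if match is None:
        raise ValueError(f"unrecognized version string: {version!r}")
    parts = [int(part) for part in match.group(0).split(".")]
    while parts and parts[-1] == 0:
        parts.pop()  # makes '2.1' and '2.1.0' compare equal
    return tuple(parts)


def in_range(version, lower, upper):
    """Check lower <= version < upper on numeric release parts only."""
    return release_key(lower) <= release_key(version) < release_key(upper)


def check_environment(requirements=REQUIREMENTS):
    """Map each distribution name to 'ok', 'out of range', or 'missing'."""
    report = {}
    for name, lower, upper in requirements:
        try:
            installed = metadata.version(name)
        except metadata.PackageNotFoundError:
            report[name] = "missing"
            continue
        report[name] = "ok" if in_range(installed, lower, upper) else "out of range"
    return report


if __name__ == "__main__":
    for package, status in check_environment().items():
        print(f"{package}: {status}")
```

Run the script inside the `open-mmlab` conda environment. A `missing` or `out of range` entry suggests that the corresponding `mim install` step should be repeated.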

The usage of MMYOLO is almost identical to that of MMDetection, and all tutorials are straightforward to follow. You can also consult the MMDetection User Guide and Advanced Guide.

For the parts that differ from MMDetection, we have also prepared user guides and advanced guides; please read our documentation.

- User Guides

- Algorithm description

- Advanced Guides

Results and models are available in the model zoo.

| Backbones | Necks | Loss | Common |
| --- | --- | --- | --- |
|  |  |  |  |

Please refer to the FAQ for frequently asked questions.

We appreciate all contributions to improving MMYOLO. Ongoing projects can be found in our GitHub Projects, and community users are welcome to participate in them. Please refer to CONTRIBUTING.md for the contributing guidelines.

MMYOLO is an open source project contributed to by researchers and engineers from various colleges and companies. We appreciate all the contributors who implement their methods or add new features, as well as the users who give valuable feedback. We hope the toolbox and benchmark will serve the growing research community by providing a flexible toolkit for reimplementing existing methods and developing new detectors.

If you find this project useful in your research, please consider citing:

```bibtex
@misc{mmyolo2022,
    title={{MMYOLO: OpenMMLab YOLO} series toolbox and benchmark},
    author={MMYOLO Contributors},
    howpublished = {\url{https://github.com/open-mmlab/mmyolo}},
    year={2022}
}
```

This project is released under the GPL 3.0 license.

- MMEngine: OpenMMLab foundational library for training deep learning models.

- MMCV: OpenMMLab foundational library for computer vision.

- MIM: MIM installs OpenMMLab packages.

- MMClassification: OpenMMLab image classification toolbox and benchmark.

- MMDetection: OpenMMLab detection toolbox and benchmark.

- MMDetection3D: OpenMMLab's next-generation platform for general 3D object detection.

- MMRotate: OpenMMLab rotated object detection toolbox and benchmark.

- MMYOLO: OpenMMLab YOLO series toolbox and benchmark.

- MMSegmentation: OpenMMLab semantic segmentation toolbox and benchmark.

- MMOCR: OpenMMLab text detection, recognition, and understanding toolbox.

- MMPose: OpenMMLab pose estimation toolbox and benchmark.

- MMHuman3D: OpenMMLab 3D human parametric model toolbox and benchmark.

- MMSelfSup: OpenMMLab self-supervised learning toolbox and benchmark.

- MMRazor: OpenMMLab model compression toolbox and benchmark.

- MMFewShot: OpenMMLab fewshot learning toolbox and benchmark.

- MMAction2: OpenMMLab's next-generation action understanding toolbox and benchmark.

- MMTracking: OpenMMLab video perception toolbox and benchmark.

- MMFlow: OpenMMLab optical flow toolbox and benchmark.

- MMEditing: OpenMMLab image and video editing toolbox.

- MMGeneration: OpenMMLab image and video generative models toolbox.

- MMDeploy: OpenMMLab model deployment framework.