For this project, you will work with the Reacher environment.

In this environment, a double-jointed arm can move to target locations. A reward of +0.1 is provided for each step that the agent's hand is in the goal location. Thus, the goal of your agent is to maintain its position at the target location for as many time steps as possible.

The observation space consists of 33 variables corresponding to position, rotation, velocity, and angular velocities of the arm. Each action is a vector with four numbers, corresponding to torque applicable to two joints. Every entry in the action vector should be a number between -1 and 1.

For this project, we will provide you with two separate versions of the Unity environment:

- The first version contains a single agent.

- The second version contains 20 identical agents, each with its own copy of the environment.

The second version is useful for algorithms like PPO, A3C, and D4PG that use multiple (non-interacting, parallel) copies of the same agent to distribute the task of gathering experience.

Note that your project submission need only solve one of the two versions of the environment.

The task is episodic, and in order to solve the environment, your agent must get an average score of +30 over 100 consecutive episodes.

The barrier for solving the second version of the environment is slightly different, to take into account the presence of many agents. In particular, your agents must get an average score of +30 (over 100 consecutive episodes, and over all agents). Specifically,

- After each episode, we add up the rewards that each agent received (without discounting), to get a score for each agent. This yields 20 (potentially different) scores. We then take the average of these 20 scores.

- This yields an average score for each episode (where the average is over all 20 agents).

The environment is considered solved, when the average (over 100 episodes) of those average scores is at least +30.

-

Download the environment from one of the links below. You need only select the environment that matches your operating system:

-

Version 1: One (1) Agent

- Linux: click here

- Mac OSX: click here

- Windows (32-bit): click here

- Windows (64-bit): click here

-

Version 2: Twenty (20) Agents

- Linux: click here

- Mac OSX: click here

- Windows (32-bit): click here

- Windows (64-bit): click here

(For Windows users) Check out this link if you need help with determining if your computer is running a 32-bit version or 64-bit version of the Windows operating system.

(For AWS) If you'd like to train the agent on AWS (and have not enabled a virtual screen), then please use this link (version 1) or this link (version 2) to obtain the "headless" version of the environment. You will not be able to watch the agent without enabling a virtual screen, but you will be able to train the agent. (To watch the agent, you should follow the instructions to enable a virtual screen, and then download the environment for the Linux operating system above.)

-

-

Place the file in the DRLND GitHub repository, in the

TD3_continuous_control/folder, and unzip (or decompress) the file. -

Rename the file to Reacher

-

Install environment

- pip install matplotlib

- pip install mlagents

- pip install numpy

- pip install tensorboardx

- pip install tensorboard

-

In the

TD3_continuous_control/folder run command:python train.py --eval_load_best=Trueorpython train.py --eval_load_best=True --slow_and_pretty=Truefor a slow representation

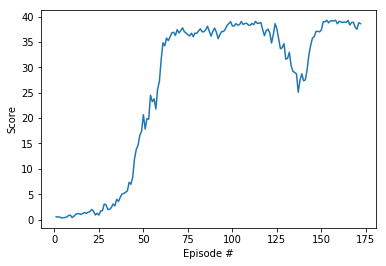

As an example, consider the plot below, where we have plotted the average score (over all 20 agents) obtained with each episode.

Plot of average scores (over all agents) with each episode.

The environment is considered solved, when the average (over 100 episodes) of those average scores is at least +30. In the case of the plot above, the environment was solved at episode 63, since the average of the average scores from episodes 64 to 163 (inclusive) was greater than +30.

The following list is more of notes to myself. It contains further improvement i want to implement and test out:

- N-step

- Priority experience replay (Replay buffer)

- Look at the priority function in this paper https://arxiv.org/pdf/1707.08817.pdf

- Improved Critic with better model (maybe fit own rainbow implementation)

Profiling the script to improve running speed.Auto generate logs for each train_run.Optimize hyper-parameters

These are not in order, but from experience, leave optimizing hyper-parameters to after modification. Reason is, the parameters vary a lot depending on the modifications.

Through this project I have found the information on the following sites to be a very big help in form of easy explaination and simple follow through of the TD3 algorithm, a big thanks for all the good explaination they have given us for free: