Optimize consistency checks for deleted files #189

Merged

This PR optimizes the performance of consistency checks when a large number of files are deleted.

When doing

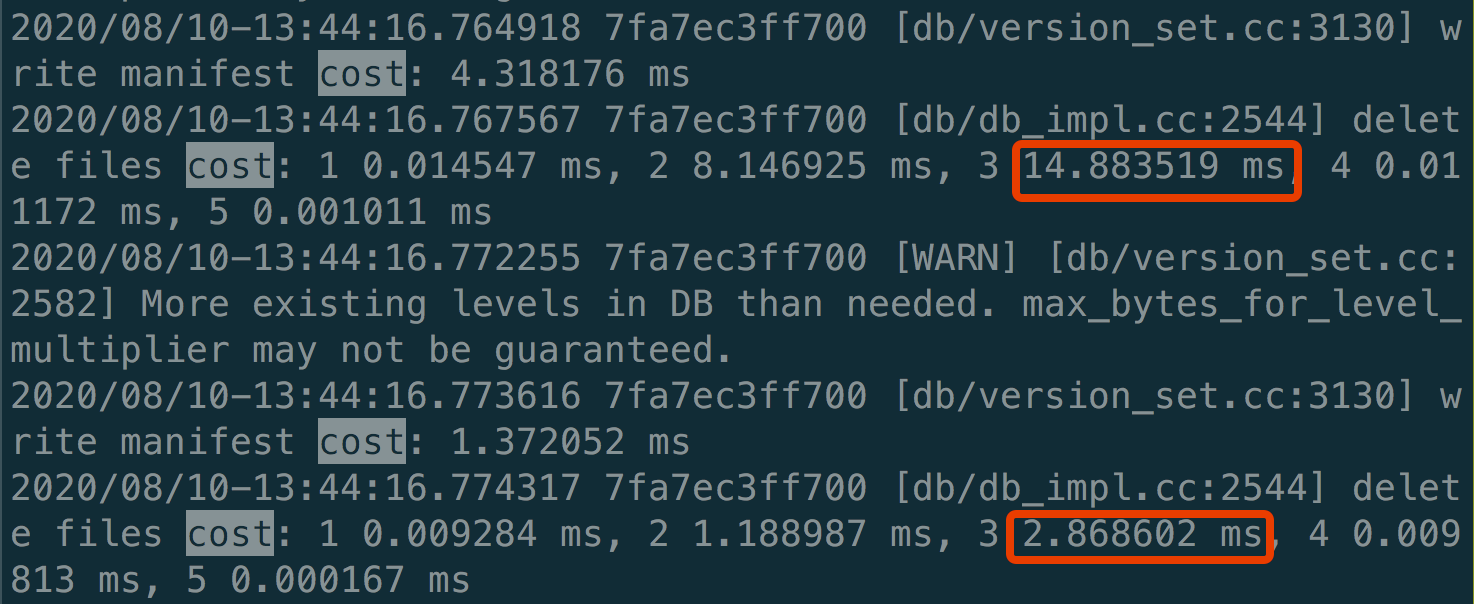

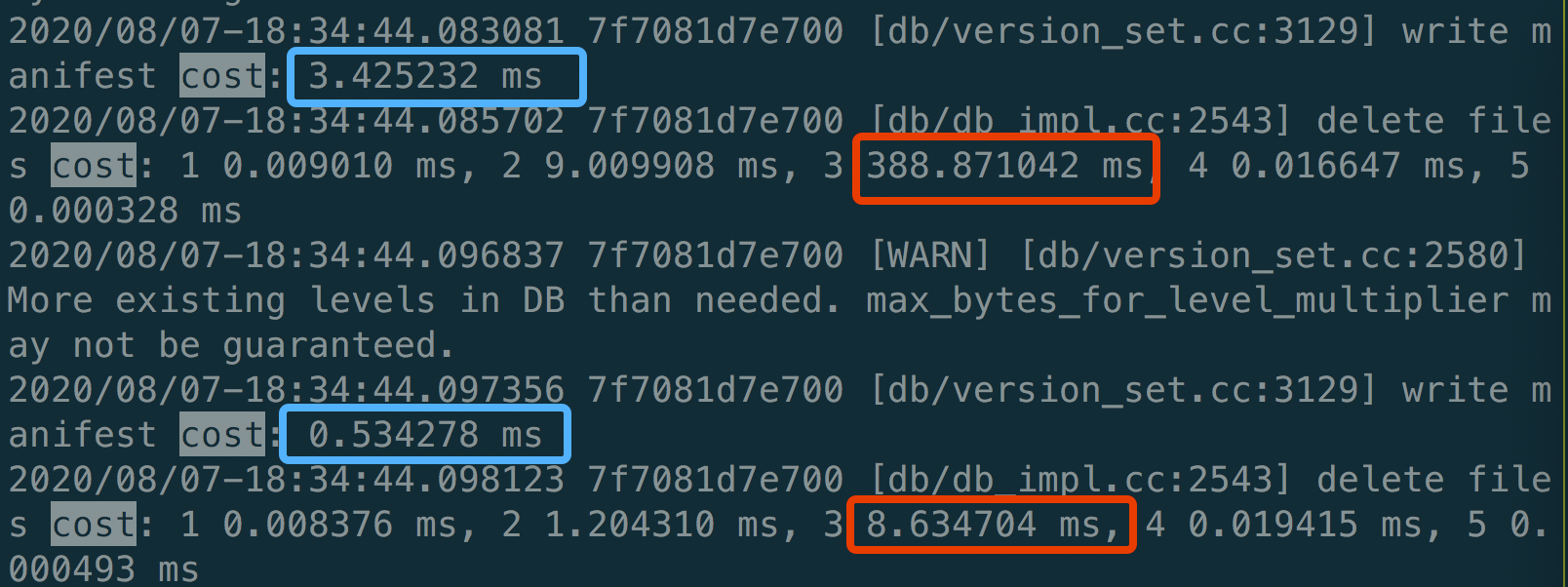

DeleteFilesInRangewithforce_consistency_checkson for a large range which spans about 16.5K SST files, we found it spent most of the time inLogAndApply(the time in red rectangle).In

CheckConsistencyForDeletes, it traverses the whole LSM to check if the file existed in the previous version for every deleted file. In the case of a lot of deleted files such asDeleteFilesInRange, it would waste too much time on this operation. Even worse, it is done with db mutex held, so it may greatly affect the foreground write performance.After making the check in batch with only one round of traverse of the LSM, the time is greatly reduced.
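The difference between the per-file check and the batched check can be sketched as follows. This is a minimal illustration and not the code in this PR: the `Levels` type and the functions `ExistsInLsm`, `CheckDeletesOneByOne`, and `CheckDeletesBatched` are hypothetical names, and the LSM is modeled as plain vectors of file numbers rather than RocksDB's version structures.

```cpp
#include <cstdint>
#include <unordered_set>
#include <vector>

// Hypothetical stand-in for a version of the LSM:
// levels[i] holds the file numbers that live at level i.
using Levels = std::vector<std::vector<uint64_t>>;

// Scans every level looking for a single file number.
bool ExistsInLsm(const Levels& levels, uint64_t file_number) {
  for (const auto& level : levels) {
    for (uint64_t f : level) {
      if (f == file_number) return true;
    }
  }
  return false;
}

// Per-file approach: one full LSM scan per deleted file.
// With D deleted files and N files in the LSM this costs O(D * N).
bool CheckDeletesOneByOne(const Levels& levels,
                          const std::vector<uint64_t>& deleted) {
  for (uint64_t d : deleted) {
    if (!ExistsInLsm(levels, d)) return false;
  }
  return true;
}

// Batched approach: collect the deleted file numbers in a hash set,
// then walk the LSM once and cross off each file that is found.
// Expected cost is O(D + N).
bool CheckDeletesBatched(const Levels& levels,
                         const std::vector<uint64_t>& deleted) {
  std::unordered_set<uint64_t> pending(deleted.begin(), deleted.end());
  for (const auto& level : levels) {
    for (uint64_t f : level) {
      pending.erase(f);
      if (pending.empty()) return true;  // every deleted file was found
    }
  }
  // Anything left in the set did not exist in the previous version.
  return pending.empty();
}
```

Both functions return the same answer for the same input. The batched variant simply replaces the repeated scans with a single pass, which matters most when the scan runs while a mutex is held.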