Highlights

- An advanced and powerful inpainting algorithm named PowerPaint is released in our repository. Click to View

New Features & Improvements

- [Release] Post release for v1.1.0 by @liuwenran in open-mmlab#2043

- [CodeCamp2023-645]Add dreambooth new cfg by @YanxingLiu in open-mmlab#2042

- [Enhance] add new config for base dir by @liuwenran in open-mmlab#2053

- [Enhance] support using from_pretrained for instance_crop by @zengyh1900 in open-mmlab#2066

- [Enhance] update support for latest diffusers with lora by @zengyh1900 in open-mmlab#2067

- [Feature] PowerPaint by @zhuang2002 in open-mmlab#2076

- [Enhance] powerpaint improvement by @liuwenran in open-mmlab#2078

- [Enhance] Improve powerpaint by @liuwenran in open-mmlab#2080

- [Enhance] add outpainting to gradio_PowerPaint.py by @zhuang2002 in open-mmlab#2084

- [MMSIG] Add new configuration files for StyleGAN2 by @xiaomile in open-mmlab#2057

- [MMSIG] [Doc] Update data_preprocessor.md by @jinxianwei in open-mmlab#2055

- [Enhance] Enhance PowerPaint by @zhuang2002 in open-mmlab#2093

Bug Fixes

- [Fix] Update README.md by @eze1376 in open-mmlab#2048

- [Fix] Fix test tokenizer by @liuwenran in open-mmlab#2050

- [Fix] fix readthedocs building by @liuwenran in open-mmlab#2052

- [Fix] --local-rank for PyTorch >= 2.0.0 by @youqingxiaozhua in open-mmlab#2051

- [Fix] animatediff download from openxlab by @JianxinDong in open-mmlab#2061

- [Fix] fix best practice by @liuwenran in open-mmlab#2063

- [Fix] try import expand mask from transformers by @zengyh1900 in open-mmlab#2064

- [Fix] Update diffusers to v0.23.0 by @liuwenran in open-mmlab#2069

- [Fix] add openxlab link to powerpaint by @liuwenran in open-mmlab#2082

- [Fix] Update swinir_x2s48w8d6e180_8xb4-lr2e-4-500k_div2k.py, use MultiValLoop. by @ashutoshsingh0223 in open-mmlab#2085

- [Fix] Fix a test expression that has a logical short circuit. by @munahaf in open-mmlab#2046

- [Fix] Powerpaint to load safetensors by @sdbds in open-mmlab#2088
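
For context on the `--local-rank` fix listed above: launchers shipped with PyTorch >= 2.0.0 pass the rank as `--local-rank` (hyphen), while older scripts usually declare `--local_rank` (underscore). The following is a minimal sketch of one way a script can accept both spellings, assuming a plain `argparse` entry point. The `build_parser` helper is hypothetical and is not the code merged in open-mmlab#2051.

```python
import argparse


def build_parser() -> argparse.ArgumentParser:
    """Build a parser that accepts both spellings of the local-rank flag."""
    parser = argparse.ArgumentParser(description="hypothetical training entry point")
    # Both option strings map to one destination. argparse derives the
    # attribute name from the first long option, so it is `local_rank`.
    parser.add_argument("--local_rank", "--local-rank", type=int, default=0)
    return parser


# Either spelling ends up in the same attribute.
new_style = build_parser().parse_args(["--local-rank", "1"])
old_style = build_parser().parse_args(["--local_rank", "1"])
print(new_style.local_rank, old_style.local_rank)
```

Registering both option strings on a single argument keeps one destination, so the rest of the script does not need to know which launcher was used.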

New Contributors

- @eze1376 made their first contribution in open-mmlab#2048

- @youqingxiaozhua made their first contribution in open-mmlab#2051

- @JianxinDong made their first contribution in open-mmlab#2061

- @zhuang2002 made their first contribution in open-mmlab#2076

- @ashutoshsingh0223 made their first contribution in open-mmlab#2085

- @jinxianwei made their first contribution in open-mmlab#2055

- @munahaf made their first contribution in open-mmlab#2046

- @sdbds made their first contribution in open-mmlab#2088

Full Changelog: https://github.com/open-mmlab/mmagic/compare/v1.1.0...v1.2.0

Highlights

In this new version of MMagic, we have added support for the following five new algorithms.

- Support ViCo, a new SD personalization method. Click to View

- Support AnimateDiff, a popular text2animation method. Click to View

- Support SDXL. Click to View

- Support DragGAN implementation with MMagic. Click to View

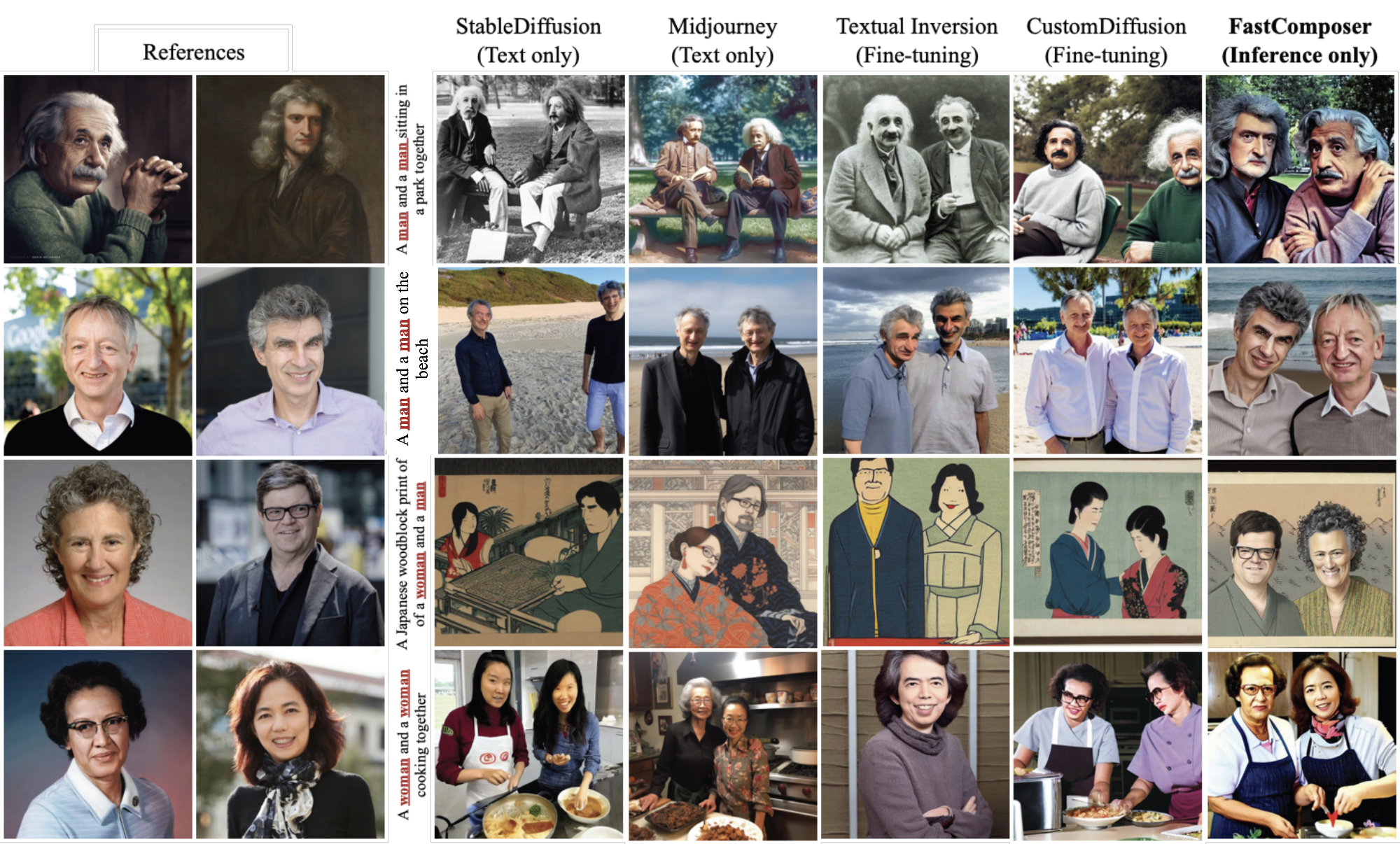

- Support for FastComposer. Click to View

New Features & Improvements

- [Feature] Support inference with diffusers pipeline, sd_xl first. by @liuwenran in open-mmlab#2023

- [Enhance] add negative prompt for sd inferencer by @liuwenran in open-mmlab#2021

- [Enhance] Update flake8 checking config in setup.cfg by @LeoXing1996 in open-mmlab#2007

- [Enhance] Add 'config_name' as a supplement to the 'model_setting' by @liuwenran in open-mmlab#2027

- [Enhance] faster test by @okotaku in open-mmlab#2034

- [Enhance] Add OpenXLab Badge by @ZhaoQiiii in open-mmlab#2037

CodeCamp Contributions

- [CodeCamp2023-643] Add new configs of BigGAN by @limafang in open-mmlab#2003

- [CodeCamp2023-648] MMagic new config GuidedDiffusion by @ooooo-create in open-mmlab#2005

- [CodeCamp2023-649] MMagic new config Instance Colorization by @ooooo-create in open-mmlab#2010

- [CodeCamp2023-652] MMagic new config StyleGAN3 by @hhy150 in open-mmlab#2018

- [CodeCamp2023-653] Add new configs of Real BasicVSR by @RangeKing in open-mmlab#2030

Bug Fixes

- [Fix] Fix best practice and back to contents on mainpage, add new models to model zoo by @liuwenran in open-mmlab#2001

- [Fix] Check CI error and remove main stream gpu test by @liuwenran in open-mmlab#2013

- [Fix] Check circle ci memory by @liuwenran in open-mmlab#2016

- [Fix] remove code and fix clip loss ut test by @liuwenran in open-mmlab#2017

- [Fix] mock infer in diffusers pipeline inferencer ut. by @liuwenran in open-mmlab#2026

- [Fix] Fix bug caused by merging draggan by @liuwenran in open-mmlab#2029

- [Fix] Update QRcode by @crazysteeaam in open-mmlab#2009

- [Fix] Replace the download links in README with OpenXLab version by @FerryHuang in open-mmlab#2038

- [Fix] Increase docstring coverage by @liuwenran in open-mmlab#2039

New Contributors

- @limafang made their first contribution in open-mmlab#2003

- @ooooo-create made their first contribution in open-mmlab#2005

- @hhy150 made their first contribution in open-mmlab#2018

- @ZhaoQiiii made their first contribution in open-mmlab#2037

- @ElliotQi made their first contribution in open-mmlab#1980

- @Beaconsyh08 made their first contribution in open-mmlab#2012

Full Changelog: https://github.com/open-mmlab/mmagic/compare/v1.0.2...v1.0.3

Highlights

1. More detailed documentation

Thank you to the community contributors for helping us improve the documentation. We have improved many documents, including both Chinese and English versions. Please refer to the documentation for more details.

2. New algorithms

- Support Prompt-to-prompt, DDIM Inversion and Null-text Inversion. Click to View.

From right to left: original image, DDIM inversion, Null-text inversion

Prompt-to-prompt Editing

- Support Textual Inversion. Click to view.

- Support Attention Injection for more stable video generation with controlnet. Click to view.

- Support Stable Diffusion Inpainting. Click to view.

New Features & Improvements

- [Enhancement] Support noise offset in stable diffusion training by @LeoXing1996 in open-mmlab#1880

- [Community] Support Glide Upsampler by @Taited in open-mmlab#1663

- [Enhance] support controlnet inferencer by @Z-Fran in open-mmlab#1891

- [Feature] support Albumentations augmentation transformations and pipeline by @Z-Fran in open-mmlab#1894

- [Feature] Add Attention Injection for unet by @liuwenran in open-mmlab#1895

- [Enhance] update benchmark scripts by @Z-Fran in open-mmlab#1907

- [Enhancement] update mmagic docs by @crazysteeaam in open-mmlab#1920

- [Enhancement] Support Prompt-to-prompt, ddim inversion and null-text inversion by @FerryHuang in open-mmlab#1908

- [CodeCamp2023-302] Support MMagic visualization and write a user guide by @aptsunny in open-mmlab#1939

- [Feature] Support Textual Inversion by @LeoXing1996 in open-mmlab#1822

- [Feature] Support stable diffusion inpaint by @Taited in open-mmlab#1976

- [Enhancement] Adopt `BaseModule` for some models by @LeoXing1996 in open-mmlab#1543

- [MMSIG] Support DeblurGANv2 inference by @xiaomile in open-mmlab#1955

- [CodeCamp2023-647] Add new configs of EG3D by @RangeKing in open-mmlab#1985

Bug Fixes

- Fix dtype error in StableDiffusion and DreamBooth training by @LeoXing1996 in open-mmlab#1879

- Fix gui VideoSlider bug by @Z-Fran in open-mmlab#1885

- Fix init_model and glide demo by @Z-Fran in open-mmlab#1888

- Fix InstColorization bug when dim=3 by @Z-Fran in open-mmlab#1901

- Fix sd and controlnet fp16 bugs by @Z-Fran in open-mmlab#1914

- Fix num_images_per_prompt in controlnet by @LeoXing1996 in open-mmlab#1936

- Revise metafile for sd-inpainting to fix inferencer init by @LeoXing1996 in open-mmlab#1995

New Contributors

- @wyyang23 made their first contribution in open-mmlab#1886

- @yehuixie made their first contribution in open-mmlab#1912

- @crazysteeaam made their first contribution in open-mmlab#1920

- @BUPT-NingXinyu made their first contribution in open-mmlab#1921

- @zhjunqin made their first contribution in open-mmlab#1918

- @xuesheng1031 made their first contribution in open-mmlab#1923

- @wslgqq277g made their first contribution in open-mmlab#1934

- @LYMDLUT made their first contribution in open-mmlab#1933

- @RangeKing made their first contribution in open-mmlab#1930

- @xin-li-67 made their first contribution in open-mmlab#1932

- @chg0901 made their first contribution in open-mmlab#1931

- @aptsunny made their first contribution in open-mmlab#1939

- @YanxingLiu made their first contribution in open-mmlab#1943

- @tackhwa made their first contribution in open-mmlab#1937

- @Geo-Chou made their first contribution in open-mmlab#1940

- @qsun1 made their first contribution in open-mmlab#1956

- @ththth888 made their first contribution in open-mmlab#1961

- @sijiua made their first contribution in open-mmlab#1967

- @MING-ZCH made their first contribution in open-mmlab#1982

- @AllYoung made their first contribution in open-mmlab#1996

New Features & Improvements

- Support tomesd for StableDiffusion speed-up. #1801

- Support all inpainting/matting/image restoration models inferencer. #1833, #1873

- Support animated drawings at projects. #1837

- Support Style-Based Global Appearance Flow for Virtual Try-On at projects. #1786

- Support tokenizer wrapper and support EmbeddingLayerWithFixe. #1846

Bug Fixes

- Fix install requirements. #1819

- Fix inst-colorization PackInputs. #1828, #1827

- Fix inferencer in pip-install. #1875

New Contributors

- @XDUWQ made their first contribution in open-mmlab#1830

- @FerryHuang made their first contribution in open-mmlab#1786

- @bobo0810 made their first contribution in open-mmlab#1851

- @jercylew made their first contribution in open-mmlab#1874

We are excited to announce the release of MMagic v1.0.0 that inherits from MMEditing and MMGeneration.

Since its inception, MMEditing has been the preferred algorithm library for many super-resolution, editing, and generation tasks, helping research teams win more than 10 top international competitions and supporting over 100 GitHub ecosystem projects. After iterative updates with the OpenMMLab 2.0 framework and its merger with MMGeneration, MMEditing has become a powerful tool that supports low-level algorithms based on both GAN and CNN.

Today, MMEditing embraces Generative AI and transforms into a more advanced and comprehensive AIGC toolkit: MMagic (Multimodal Advanced, Generative, and Intelligent Creation).

In MMagic, we have supported 53+ models in multiple tasks such as fine-tuning for stable diffusion, text-to-image, image and video restoration, super-resolution, editing and generation. With excellent training and experiment management support from MMEngine, MMagic will provide more agile and flexible experimental support for researchers and AIGC enthusiasts, and help you on your AIGC exploration journey. With MMagic, experience more magic in generation! Let's open a new era beyond editing together. More than Editing, Unlock the Magic!

Highlights

1. New Models

We support 11 new models in 4 new tasks.

- Text2Image / Diffusion

  - ControlNet

  - DreamBooth

  - Stable Diffusion

  - Disco Diffusion

  - GLIDE

  - Guided Diffusion

- 3D-aware Generation

  - EG3D

- Image Restoration

  - NAFNet

  - Restormer

  - SwinIR

- Image Colorization

  - InstColorization

mmagic_introduction.mp4

2. Magic Diffusion Model

For the Diffusion Model, we provide the following "magic":

- Support image generation based on Stable Diffusion and Disco Diffusion.

- Support fine-tuning methods such as DreamBooth and DreamBooth LoRA.

- Support controllability in text-to-image generation using ControlNet.

- Support acceleration and optimization strategies based on xFormers to improve training and inference efficiency.

- Support video generation based on MultiFrame Render. MMagic supports the generation of long videos in various styles through ControlNet and MultiFrame Render.

  prompt keywords: a handsome man, silver hair, smiling, play basketball

  caixukun_dancing_begin_fps10_frames_cat.mp4

  prompt keywords: a girl, black hair, white pants, smiling, play basketball

  caixukun_dancing_begin_fps10_frames_girl_boycat.mp4

  prompt keywords: a handsome man

  zhou_woyangni_fps10_frames_resized_cat.mp4

- Support calling basic models and sampling strategies through DiffuserWrapper.

- SAM + MMagic = Generate Anything! SAM (Segment Anything Model) is a popular model these days and can also provide more support for MMagic! If you want to create your own animation, you can go to OpenMMLab PlayGround.

  huangbo_fps10_playground_party_fixloc_cat.mp4

3. Upgraded Framework

To improve your "spellcasting" efficiency, we have made the following adjustments to the "magic circuit":

- By using MMEngine and MMCV from the OpenMMLab 2.0 framework, we decompose the editing framework into modules, so a customized editor framework can be built by combining them. The training process can be defined like playing with Lego bricks, with rich components and strategies provided. In MMagic, you can control the training process through APIs at different levels.

- Support for 33+ algorithms accelerated by PyTorch 2.0.

- Refactor DataSample to support the combination and splitting of batch dimensions.

- Refactor DataPreprocessor and unify the data format for various tasks during training and inference.

- Refactor MultiValLoop and MultiTestLoop, supporting the evaluation of both generation-type metrics (e.g. FID) and reconstruction-type metrics (e.g. SSIM), and supporting the evaluation of multiple datasets at once.

- Support visualization on local files or using tensorboard and wandb.

New Features & Improvements

- Support 53+ algorithms, 232+ configs, 213+ checkpoints, 26+ loss functions, and 20+ metrics.

- Support controlnet animation and Gradio gui. Click to view.

- Support Inferencer and Demo using High-level Inference APIs. Click to view.

- Support Gradio gui of Inpainting inference. Click to view.

- Support qualitative comparison tools. Click to view.

- Enable projects. Click to view.

- Improve converters scripts and documents for datasets. Click to view.

Highlights

We are excited to announce the release of MMEditing 1.0.0rc7. This release supports 51+ models, 226+ configs and 212+ checkpoints in MMGeneration and MMEditing. We highlight the following new features:

- Support DiffuserWrapper

- Support ControlNet (training and inference).

- Support PyTorch 2.0.

New Features & Improvements

- Support DiffuserWrapper. #1692

- Support ControlNet (training and inference). #1744

- Support PyTorch 2.0 (successfully compile 33+ models on 'inductor' backend). #1742

- Support Image Super-Resolution and Video Super-Resolution models inferencer. #1662, #1720

- Refactor tools/get_flops script. #1675

- Refactor dataset_converters and documents for datasets. #1690

- Move stylegan ops to MMCV. #1383

Bug Fixes

Contributors

A total of 8 developers contributed to this release. Thanks @LeoXing1996, @Z-Fran, @plyfager, @zengyh1900, @liuwenran, @ryanxingql, @HAOCHENYE, @VongolaWu

New Contributors

- @HAOCHENYE made their first contribution in open-mmlab#1712

Highlights

We are excited to announce the release of MMEditing 1.0.0rc6. This release supports 50+ models, 222+ configs and 209+ checkpoints in MMGeneration and MMEditing. We highlight the following new features:

- Support Gradio gui of Inpainting inference.

- Support Colorization, Translation and GAN models inferencer.

New Features & Improvements

- Refactor FileIO. #1572

- Refactor registry. #1621

- Refactor Random degradations. #1583

- Refactor DataSample, DataPreprocessor, Metric and Loop. #1656

- Use mmengine's `BaseModule` instead of `nn.Module`. #1491

- Refactor Main Page. #1609

- Support Gradio gui of Inpainting inference. #1601

- Support Colorization inferencer. #1588

- Support Translation models inferencer. #1650

- Support GAN models inferencer. #1653, #1659

- Print config tool. #1590

- Improve type hints. #1604

- Update Chinese documents of metrics and datasets. #1568, #1638

- Update Chinese documents of BigGAN and Disco-Diffusion. #1620

- Update Evaluation and README of Guided-Diffusion. #1547

Bug Fixes

- Fix the meaning of `momentum` in EMA. #1581

- Fix output dtype of RandomNoise. #1585

- Fix pytorch2onnx tool. #1629

- Fix API documents. #1641, #1642

- Fix loading RealESRGAN EMA weights. #1647

- Fix arg passing bug of dataset_converters scripts. #1648
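
The `momentum` entry above concerns which term of the exponential moving average the coefficient weights. The following is a minimal sketch of the two common conventions, assuming `momentum` weights the incoming value and `decay` weights the running average. The function names are hypothetical, and this is not the code changed in #1581.

```python
def ema_update_momentum(averaged: float, source: float, momentum: float) -> float:
    """EMA step where `momentum` weights the NEW value (assumed convention)."""
    return (1.0 - momentum) * averaged + momentum * source


def ema_update_decay(averaged: float, source: float, decay: float) -> float:
    """EMA step where `decay` weights the OLD running average."""
    return decay * averaged + (1.0 - decay) * source


# The two conventions agree when momentum == 1 - decay.
a = ema_update_momentum(averaged=1.0, source=0.0, momentum=0.1)
b = ema_update_decay(averaged=1.0, source=0.0, decay=0.9)
print(a, b)
```

Passing a decay-style value such as 0.999 where a momentum-style value is expected makes the average track the latest weights almost exactly, which is why the meaning of the argument matters.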

Contributors

A total of 17 developers contributed to this release. Thanks @plyfager, @LeoXing1996, @Z-Fran, @zengyh1900, @VongolaWu, @liuwenran, @austinmw, @dienachtderwelt, @liangzelong, @i-aki-y, @xiaomile, @Li-Qingyun, @vansin, @Luo-Yihang, @ydengbi, @ruoningYu, @triple-Mu

New Contributors

- @dienachtderwelt made their first contribution in open-mmlab#1578

- @i-aki-y made their first contribution in open-mmlab#1590

- @triple-Mu made their first contribution in open-mmlab#1618

- @Li-Qingyun made their first contribution in open-mmlab#1640

- @Luo-Yihang made their first contribution in open-mmlab#1648

- @ydengbi made their first contribution in open-mmlab#1557

Highlights

We are excited to announce the release of MMEditing 1.0.0rc5. This release supports 49+ models, 180+ configs and 177+ checkpoints in MMGeneration and MMEditing. We highlight the following new features:

- Support Restormer.

- Support GLIDE.

- Support SwinIR.

- Support Stable Diffusion.

New Features & Improvements

- Disco notebook. (#1507)

- Revise test requirements and CI. (#1514)

- Recursive generate summary and docstring. (#1517)

- Enable projects. (#1526)

- Support mscoco dataset. (#1520)

- Improve Chinese documents. (#1532)

- Type hints. (#1481)

- Update download link of checkpoints. (#1554)

- Update deployment guide. (#1551)

Bug Fixes

- Fix documentation link checker. (#1522)

- Fix ssim first channel bug. (#1515)

- Fix extract_gt_data of realesrgan. (#1542)

- Fix model index. (#1559)

- Fix config path in disco-diffusion. (#1553)

- Fix text2image inferencer. (#1523)

Contributors

A total of 16 developers contributed to this release. Thanks @plyfager, @LeoXing1996, @Z-Fran, @zengyh1900, @VongolaWu, @liuwenran, @AlexZou14, @lvhan028, @xiaomile, @ldr426, @austin273, @whu-lee, @willaty, @curiosity654, @Zdafeng, @Taited

New Contributors

- @xiaomile made their first contribution in open-mmlab#1481

- @ldr426 made their first contribution in open-mmlab#1542

- @austin273 made their first contribution in open-mmlab#1553

- @whu-lee made their first contribution in open-mmlab#1539

- @willaty made their first contribution in open-mmlab#1541

- @curiosity654 made their first contribution in open-mmlab#1556

- @Zdafeng made their first contribution in open-mmlab#1476

- @Taited made their first contribution in open-mmlab#1534

Highlights

We are excited to announce the release of MMEditing 1.0.0rc4. This release supports 45+ models, 176+ configs and 175+ checkpoints in MMGeneration and MMEditing. We highlight the following new features:

- Support High-level APIs.

- Support diffusion models.

- Support Text2Image Task.

- Support 3D-Aware Generation.

New Features & Improvements

- Refactor High-level APIs. (#1410)

- Support disco-diffusion text-2-image. (#1234, #1504)

- Support EG3D. (#1482, #1493, #1494, #1499)

- Support NAFNet model. (#1369)

Bug Fixes

- fix srgan train config. (#1441)

- fix cain config. (#1404)

- fix rdn and srcnn train configs. (#1392)

Contributors

A total of 14 developers contributed to this release. Thanks @plyfager, @LeoXing1996, @Z-Fran, @zengyh1900, @VongolaWu, @gaoyang07, @ChangjianZhao, @zxczrx123, @jackghosts, @liuwenran, @CCODING04, @RoseZhao929, @shaocongliu, @liangzelong.

New Contributors

- @gaoyang07 made their first contribution in open-mmlab#1372

- @ChangjianZhao made their first contribution in open-mmlab#1461

- @zxczrx123 made their first contribution in open-mmlab#1462

- @jackghosts made their first contribution in open-mmlab#1463

- @liuwenran made their first contribution in open-mmlab#1410

- @CCODING04 made their first contribution in open-mmlab#783

- @RoseZhao929 made their first contribution in open-mmlab#1474

- @shaocongliu made their first contribution in open-mmlab#1470

- @liangzelong made their first contribution in open-mmlab#1488

Highlights

We are excited to announce the release of MMEditing 1.0.0rc3. This release supports 43+ models, 170+ configs and 169+ checkpoints in MMGeneration and MMEditing. We highlight the following new features:

- Convert `mmdet` and `clip` to optional requirements.

New Features & Improvements

- Support `try_import` for `mmdet`. (#1408)

- Support `try_import` for `flip`. (#1420)

- Update `.gitignore`. (#1416)

- Set `real_feat` to cpu in `inception_utils`. (#1415)

- Modify README and configs of StyleGAN2 and PEGAN (#1418)

- Improve the rendering of Docs-API (#1373)
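
The `try_import` entries above relate to making heavy dependencies optional. The following is a minimal sketch of that pattern, assuming a helper that returns `None` instead of raising when a package is missing. It is a hypothetical re-implementation for illustration, not the helper merged in #1408 or #1420.

```python
from importlib import import_module
from types import ModuleType
from typing import Optional


def try_import(name: str) -> Optional[ModuleType]:
    """Return the imported module, or None when it is not installed."""
    try:
        return import_module(name)
    except ImportError:
        # ModuleNotFoundError is a subclass of ImportError, so a missing
        # package lands here instead of crashing at import time.
        return None


# A stdlib module resolves; a made-up package name does not.
json_mod = try_import("json")
missing = try_import("a_package_that_is_not_installed_xyz")
print(json_mod is not None, missing is None)
```

Callers can then check for `None` and raise a clear error only when the optional feature is actually used.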

Bug Fixes

- Revise config and pretrain model loading in ESRGAN (#1407)

- Revise config of LSGAN (#1409)

- Revise config of CAIN (#1404)

Contributors

A total of 5 developers contributed to this release. Thanks @Z-Fran, @zengyh1900, @plyfager, @LeoXing1996, @ruoningYu.

Highlights

We are excited to announce the release of MMEditing 1.0.0rc2. This release supports 43+ models, 170+ configs and 169+ checkpoints in MMGeneration and MMEditing. We highlight the following new features:

- patch-based and slider-based image and video comparison viewer.

- image colorization.

New Features & Improvements

- Support qualitative comparison tools. (#1303)

- Support instance aware colorization. (#1370)

- Support multi-metrics with different sample-model. (#1171)

- Improve the implementation

  - Refactor evaluation metrics. (#1164)

  - Save gt images in PGGAN's `forward`. (#1332)

  - Improve type and change default number of `preprocess_div2k_dataset.py`. (#1380)

  - Support pixel value clip in visualizer. (#1365)

  - Support SinGAN Dataset and SinGAN demo. (#1363)

  - Avoid cast int and float in GenDataPreprocessor. (#1385)

- Improve the documentation

  - Update a menu switcher. (#1162)

  - Fix TTSR's README. (#1325)

Bug Fixes

- Fix PPL bug. (#1172)

- Fix RDN number of channels. (#1328)

- Fix types of exceptions in demos. (#1372)

- Fix realesrgan ema. (#1341)

- Improve the assertion to ensure `GenerateFacialHeatmap` as `np.float32`. (#1310)

- Fix sampling behavior of `unpaired_dataset.py` and urls in cyclegan's README. (#1308)

- Fix vsr models in pytorch2onnx. (#1300)

- Fix incorrect settings in configs. (#1167,#1200,#1236,#1293,#1302,#1304,#1319,#1331,#1336,#1349,#1352,#1353,#1358,#1364,#1367,#1384,#1386,#1391,#1392,#1393)

New Contributors

- @gaoyang07 made their first contribution in open-mmlab#1372

Contributors

A total of 7 developers contributed to this release. Thanks @LeoXing1996, @Z-Fran, @zengyh1900, @plyfager, @ryanxingql, @ruoningYu, @gaoyang07.

MMEditing 1.0.0rc1 has merged MMGeneration 1.x.

- Support 42+ algorithms, 169+ configs and 168+ checkpoints.

- Support 26+ loss functions, 20+ metrics.

- Support tensorboard, wandb.

- Support unconditional GANs, conditional GANs, image2image translation and internal learning.

MMEditing 1.0.0rc0 is the first version of MMEditing 1.x, a part of the OpenMMLab 2.0 projects.

Built upon the new training engine, MMEditing 1.x unifies the interfaces of dataset, models, evaluation, and visualization.

This release contains some BC-breaking changes. Please check the migration tutorial for more details.